If you’re reading this article, chances are you have already used AI to create content, and you’re wondering whether that’s helping or quietly hurting your Google performance.

I’ve been there.

I’ve tested AI-assisted content across multiple sites. Some pages performed surprisingly well. Others stalled, even though the writing looked “perfect.” Same tools, same prompts, very different outcomes.

That’s what pushed me to look deeper: not at the tools, but at where human expertise still matters in the human vs AI content debate, especially through Google’s lens.

This article doesn’t rehash whether AI content is “allowed.” That’s already covered elsewhere. Instead, I’ll focus on where AI consistently falls short, what Google actually seems to reward, and why human involvement matters more as an accountability system than a creativity boost. This is closely tied to how Google evaluates AI content through E-E-A-T signals.

If you want clarity, not hype, you’re in the right place.

Article positioning: how this supports our core E-E-A-T guidance

Before we go deeper, a quick note on how this fits into the bigger picture.

This article builds on our broader work around E-E-A-T for AI-assisted content, but zooms in on the part most teams struggle with: human responsibility.

If you’re looking for the full framework, start with the pillar article, Trusted AI SEO Foundations. This article is the next step: it looks at where human expertise actually makes a difference in practice.

If you need a deeper understanding of the baseline standards Google expects, this builds directly on the E-E-A-T requirements for AI-assisted content.

What follows is narrower and intentionally practical.

The Real Problem Isn’t AI Content, It’s the Accountability Gap

Here’s the thing most “human vs AI content” articles get wrong.

They frame it as a writing competition.

But Google doesn’t care who typed the words. What it evaluates, indirectly but consistently, is who is responsible when something goes wrong.

Most SERP content oversimplifies the solution as “AI draft + human edit.” I tested that model. On its own, it’s not enough.

What stood out in underperforming pages wasn’t poor grammar or weak structure. It was the absence of clear ownership:

- No obvious expert behind the advice

- No sign that someone actually tested or experienced the thing being discussed

- No clear way to correct or update the content if it turned out to be wrong

That’s the accountability gap.

And this is where human vs AI content stops being about creativity and starts being about trust.

Where AI Content Consistently Fails Google’s Quality Thresholds

When AI content doesn’t rank, or drops after an initial spike, the failure patterns are remarkably consistent.

Over the past year, while reviewing AI-assisted content across a mix of small publishing sites and service pages, I kept seeing the same three:

- Mass-produced synthesis without experience: the content sounds right but adds nothing new. It reflects the web, not real use.

- No verifiable author responsibility: even with a byline, there’s no evidence the author can stand behind the claims.

- Generic confidence without real-world constraints: AI tends to smooth over edge cases, trade-offs, and failures, the very things humans look for when deciding whether to trust content.

How I Validate Quality Without Relying on Traffic Metrics

This site is still at an early stage, so I don’t rely on traffic spikes or vanity metrics to judge whether content is “good.” Instead, I focus on something more controllable than rankings: process quality.

Before anything gets published, every page goes through:

- experience checks

- factual validation

- screenshots or examples where needed

- named ownership

- a final human review

Because rankings come later.

Trust signals are what you can control from day one.

Fluent language tricks us into thinking something is authoritative. Google doesn’t fall for that as easily.

This is the core difference between knowledge synthesis and demonstrated experience. One can be automated. The other can’t.

Building these accountability systems early prevents the “mass-produced AI content” problem most new sites run into.

What “Human Expertise” Actually Means to Google (In Practice)

Human expertise, in Google’s ecosystem, isn’t about sounding smart. It’s about signals that imply real responsibility.

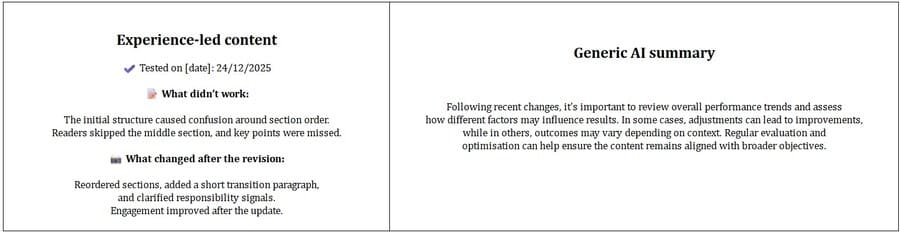

In side-by-side reviews of similar pages on my own site, the ones that held rankings longer consistently shared the same signals:

- First-hand experience documentation: screenshots, testing notes, real examples, and specifics that are hard to fake.

- Named accountability: clear authorship, reviewers, or editors, not anonymous “team” content.

- The ability to correct and update: pages that show signs of maintenance perform better over time.

This is why “human review” as a vague promise doesn’t move the needle. Without scope and standards, it’s meaningless.

And this applies well beyond high-risk topics. Even non-YMYL content benefits from visible responsibility.

Human Oversight as a Trust System (Not a Creativity Layer)

One of the biggest mindset shifts I had to make was this:

Human involvement isn’t about trying to humanize AI content with editing tricks. It’s about quality control, risk reduction, and trust signaling.

This became clear when an AI-drafted page that initially ranked well began slipping weeks later, because no one clearly owned it, so nothing was done to reinforce or update it.

Pages that hold up long-term tend to have:

- Clear responsibility chains

- Defined editorial standards

- Visible correction and update mechanisms

This is why some lightly edited AI content ranks, and some doesn’t. It’s not the prose. It’s the system behind it.

A Practical Decision Framework: When Human Expertise Is Non-Negotiable

This is the section I wish more articles included.

Here’s the simple decision logic I now use before publishing any AI-assisted content.

Decision logic

AI-first content works when:

- The topic is low risk

- No first-hand experience is implied

- The goal is descriptive, not advisory

AI-assisted, human-led content works when:

- The content includes opinions or comparisons

- Recommendations affect user decisions

- Trust matters more than speed

Human-led content is essential when:

- The topic relies on lived experience

- Brand trust is on the line

- Someone must be able to publicly defend the advice
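As a rough illustration only (not a tool from this site’s workflow), the decision logic above can be written as a small Python function. The `Page` flags, the `choose_approach` name, and the `Approach` labels are hypothetical. The list doesn’t say what happens when a page matches more than one tier, so this sketch assumes the strictest matching tier wins and falls back to the cautious middle tier when a page fits none cleanly.

```python
from dataclasses import dataclass
from enum import Enum


class Approach(Enum):
    AI_FIRST = "AI-first"
    AI_ASSISTED_HUMAN_LED = "AI-assisted, human-led"
    HUMAN_LED = "Human-led"


@dataclass
class Page:
    # Each flag mirrors one bullet in the decision logic; names are illustrative.
    low_risk: bool = True
    implies_first_hand_experience: bool = False
    advisory: bool = False  # gives advice rather than just describing
    has_opinions_or_comparisons: bool = False
    affects_user_decisions: bool = False
    trust_over_speed: bool = False
    relies_on_lived_experience: bool = False
    brand_trust_on_line: bool = False
    must_be_publicly_defended: bool = False


def choose_approach(page: Page) -> Approach:
    # Strictest tier first: any human-led trigger wins.
    if (page.relies_on_lived_experience
            or page.brand_trust_on_line
            or page.must_be_publicly_defended):
        return Approach.HUMAN_LED
    # Next, any trigger for AI-assisted, human-led content.
    if (page.has_opinions_or_comparisons
            or page.affects_user_decisions
            or page.trust_over_speed):
        return Approach.AI_ASSISTED_HUMAN_LED
    # AI-first only when all three AI-first conditions hold.
    if (page.low_risk
            and not page.implies_first_hand_experience
            and not page.advisory):
        return Approach.AI_FIRST
    # Assumption: anything else falls back to the more cautious middle tier.
    return Approach.AI_ASSISTED_HUMAN_LED


# Usage
print(choose_approach(Page()).value)  # AI-first
print(choose_approach(Page(has_opinions_or_comparisons=True)).value)  # AI-assisted, human-led
print(choose_approach(Page(brand_trust_on_line=True)).value)  # Human-led
```

Checking the tiers from strictest to loosest means a single human-led trigger can never be outvoted by a page that otherwise looks low risk.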

Quick checklist

Before publishing, I ask:

- Does this content require first-hand use or testing?

- Could inaccuracies cause real harm or loss of trust?

- Does this need a named expert who can stand behind it?

If the answer is “yes” to any of these, AI can help, but it can’t lead.

How Google Sees “Experience,” and Why AI Alone Can’t Show It

Once you know when human expertise is required, the next question becomes what Google actually recognizes as experience.

Experience, as Google evaluates it, leaves traces.

The strongest signals I’ve seen include:

- Testing notes and methodology

- Screenshots or photos from real usage

- Mentions of constraints, failures, or changes over time

A page that merely claims testing feels different to users from one that shows real constraints and decisions, and that difference shows up in engagement and trust.

AI can describe these things. It can’t originate them.

That’s where trust breaks if you’re not careful. Simulated experience might read well, but it risks long-term credibility.

Operationalizing E-E-A-T Without Slowing Down Content Production

One fear I hear a lot is that E-E-A-T slows teams down.

In practice, it doesn’t have to, as long as you systematize it.

This is the workflow I now use for all AI-assisted content on this site, and so far none of the pieces published through it has seen a quality-related drop.

The 5-Step System I Use for Every AI-Assisted Article

Over time, I turned this into a repeatable workflow so quality doesn’t depend on guesswork or “editing vibes.”

Every article follows this process:

- Define a clear search intent

- Establish credibility signals (examples, screenshots, first-hand notes)

- Generate with AI responsibly

- Have a human edit and fact-check every claim

- Optimize, link internally, and publish

This process is documented inside my AI Content Quality Resource Bundle, which includes the playbook, publishing checklist, and tool selection criteria I use internally.

The goal isn’t to make AI “sound human.” It’s to make every page accountable.

What matters is what you document:

- Who reviewed what

- What was verified

- When it was last updated

This doesn’t need to be public on every page. But it needs to exist.

This is the kind of human-in-the-loop content workflow that lets teams scale AI-assisted content without sacrificing trust or accountability.

Done right, this avoids the “mass-produced content” signals Google flags.
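The documentation requirement above (who reviewed what, what was verified, when it was last updated) can live in something as simple as an internal record per page. Here is a minimal Python sketch, assuming a hypothetical `ReviewRecord` structure; the field names and the 180-day update window are illustrative assumptions, not a standard or a Google threshold.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class ReviewRecord:
    # Hypothetical internal record; field names are illustrative, not a standard.
    url: str
    reviewer: str = ""                                          # who reviewed it
    verified_claims: List[str] = field(default_factory=list)   # what was verified
    last_updated: date = field(default_factory=date.today)     # when it was last updated

    def open_issues(self, today: date, max_age_days: int = 180) -> List[str]:
        """Return the documentation gaps for this page (empty list = nothing missing)."""
        issues = []
        if not self.reviewer.strip():
            issues.append("no named reviewer")
        if not self.verified_claims:
            issues.append("nothing recorded as verified")
        # Assumption: 180 days is an arbitrary example window, not a Google rule.
        if (today - self.last_updated).days > max_age_days:
            issues.append(f"not updated in over {max_age_days} days")
        return issues


# Usage: a draft with no reviewer, no verified claims, and an old update date
draft = ReviewRecord(url="/example-page", last_updated=date(2024, 1, 15))
print(draft.open_issues(today=date(2024, 9, 1)))
```

Returning a list of gaps rather than a single pass/fail flag makes it easy to see which part of the accountability trail is missing before a page goes live or comes up for review.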

Common Mistakes Brands Make When Relying on AI Content

These are patterns I keep seeing when reviewing scaled AI content on growing sites:

- Treating human review as cosmetic

- Scaling content before trust signals are established

- Avoiding transparency around AI use

- Publishing without clear ownership

In most cases, the issue wasn’t AI usage; it was that no one owned corrections once the content was live.

None of these fails immediately. That’s what makes them dangerous.

They show up later, as stalled rankings, reduced engagement, or quiet trust erosion.

FAQs

Can AI content rank on Google without human involvement?

– Yes, I’ve seen AI-only pages rank for months. But they tend to lose ground once competing pages add clearer authorship or fresher, experience-based examples.

What does Google mean by “Experience” in E-E-A-T?

– Evidence of first-hand use, testing, or lived involvement, not polished summaries.

Can AI-generated content meet E-E-A-T standards if reviewed by an expert?

– Yes, if the review has real scope and responsibility, not just surface edits.

Can scaling AI content too fast hurt rankings?

– It can, especially if the content lacks differentiation or ownership.

Should brands disclose AI assistance to protect trust?

– In many cases, yes, especially where users would reasonably care how content was created.

What tools do you use to write with AI safely?

– I only use tools that support long-form structure, factual grounding, and strong editing workflows.

Instead of recommending random tools, I documented my evaluation criteria inside my AI Content Quality Resource Bundle, which includes the tool selection checklist, publishing workflow, and quality standards I follow.

That’s because many AI content problems come from poor tool choices, not from AI itself.

Conclusion

Humans Aren’t Competing With AI, They’re Accountable for It

The human vs AI content debate misses the point.

Humans aren’t here to outwrite machines. They’re here to take responsibility for what gets published.

That responsibility (experience, ownership, and correction) is what turns AI output into something Google and users can trust.

Free Resource: My AI Content Quality Bundle (Playbook + Checklists + Templates)

To make this practical, I packaged everything I use internally into one simple download.

Inside the bundle, you’ll get:

- The AI-Ready Content Quality Playbook

- The publishing quality checklist

- The AI writing tool selection checklist

- A simple content brief template

These are the exact systems behind every article on this site.

If you’re working with AI-assisted content, this gives you a ready-to-use process instead of guessing what “human review” means.

3 practical templates. 1 download. No email required.

👉 Download the full AI Content Quality Bundle

If there’s one takeaway, it’s this:

Choose your content approach based on risk and workflow, not hype. This perspective is grounded in Trust-first AI SEO foundations, where accountability and quality come before scale.

And if you want to understand why Google frames quality this way in the first place, the official helpful content guidance from Google is still worth reading alongside your internal standards.

I’m using this exact approach on my own site, and every new piece here goes through the workflow outlined above.