Introduction

If you’re using AI to help create content, you’ve probably felt this tension already.

The draft looks decent. It reads smoothly. It even sounds confident. But something in the back of your mind says, “Should I really publish this as it is?”

Last quarter, I caught an AI draft confidently citing a “2019 industry study” to justify a recommendation. When I traced it back, the study was from 2015 and said the opposite. That one line would have slipped through if I hadn’t stopped to verify it.

When I first started reviewing AI-assisted drafts seriously, I noticed a pattern. The biggest problems weren’t grammar or tone. They were subtle issues: misleading claims, shaky facts, invented examples, or advice that sounded right but didn’t fully hold up once you checked it properly.

That’s where things get risky, not just for SEO, but for trust.

Over time, I stopped relying on gut feeling and built a simple, repeatable AI content review checklist that I run through before hitting publish. It’s not about making AI content “sound human.” It’s about making sure what you publish is accurate, responsible, and defensible.

In this article, I’ll walk you through that checklist step by step. I’ll show you what I actually check, what tends to break most often, and how to turn this into a reliable approval habit, whether you’re working solo or with a team.

This is not about AI tools, detection, or optimization tricks. It’s the final editorial gate before content goes live.

Before we talk about where this fits in the bigger picture, let’s ground this in a simple reality: most AI drafts fail at the final review step, not the writing step.

How This Checklist Supports the Trusted AI SEO Pillar

Before we go any further, I want to be clear about where this article fits.

On Trusted AI SEO, the broader pillar focuses on principles: how trust, accuracy, and responsibility shape long-term SEO performance. This article is narrower. It’s about execution.

Think of it this way:

- The pillar explains why trust matters and what high-quality AI-assisted content looks like.

- This article shows how I check an AI draft right before publishing to make sure it actually meets those standards.

If you want the strategic foundation behind this approach, I recommend reading the Trusted AI SEO Foundations pillar first. This checklist is how those principles show up in day-to-day publishing decisions.

Just as important, this article deliberately avoids a few things:

- No tool comparisons

- No AI detection discussion

- No deep SEO optimization tactics

This is the last-mile editorial review: the moment where you decide whether content is safe, accurate, and ready for real readers.

When You Should Use an AI Content Review Checklist

Not every AI draft needs the same level of scrutiny. Short opinion pieces or personal updates usually carry low risk. The problem is that many formats that feel safe, such as how-to guides, listicles, and comparison posts, often hide factual claims that quietly need verification.

You should treat a formal review checklist as non-negotiable when:

- The content includes statistics, dates, or factual claims

- The topic could influence decisions (health, finance, business, legal, safety)

- The draft references studies, experts, or external authorities

- The content will represent your brand publicly over the long term

Where people often go wrong is assuming that “human-reviewed” automatically means “safe.” In reality, casual editing and structured review are very different things.

A checklist forces you to slow down and answer specific questions:

- What claims am I actually making?

- What evidence supports them?

- Who is accountable if something is wrong?

That’s why I treat this checklist as a publish gate, not a nice-to-have.

The Core AI Content Review Checklist (Pre-Publish)

At this stage, most reviewers focus on tone and flow. What they often miss are recurring claim patterns (statistics, comparisons, and implied causality) that quietly introduce accuracy risk.

When I review an AI-assisted draft, I move through these checks in order. I’ve found that skipping steps almost always comes back to bite later.

Accuracy & Claim Verification Checks

The first thing I do is separate opinions from claims.

AI often blends accurate information with fabricated details in ways that look natural at a glance. That is why verification is necessary, a point reinforced by Google’s guidance on AI-generated content.

I scan the draft and flag:

- Statistics and percentages

- Dates, timelines, and historical references

- Cause-and-effect statements

- Comparisons (“X is better than Y because…”)

Once flagged, every claim must pass a simple rule: no source, no publish.

If I can’t trace a claim back to a reliable source, I either:

- Verify it manually and add a source, or

- Remove or reframe it as an opinion

This alone catches most hallucinations.
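To make the flagging pass concrete, here is a minimal sketch of how the four claim types above could be surfaced automatically before a manual read. The regular expressions, the `flag_claims` helper, and the sample draft are all illustrative assumptions, not a tool I am describing elsewhere in this article. The script only points at sentences worth checking; the verification itself is still done by hand.

```python
import re

# Hypothetical patterns, one per claim type from the list above. They are
# deliberately loose: a false positive costs a few seconds of review time,
# while a missed claim can cost a correction later.
CLAIM_PATTERNS = {
    "statistic": re.compile(r"\d+(?:\.\d+)?\s?%|\b\d+(?:\.\d+)?\s+percent\b", re.I),
    "date": re.compile(r"\b(?:19|20)\d{2}\b"),
    "cause_effect": re.compile(r"\b(?:because|leads? to|results? in|causes?|due to)\b", re.I),
    "comparison": re.compile(r"\b(?:better|worse|faster|slower|more|less)\s+than\b", re.I),
}


def split_sentences(text):
    """Naive splitter: breaks after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def flag_claims(text):
    """Return (sentence, [claim types]) for each sentence matching any pattern."""
    flagged = []
    for sentence in split_sentences(text):
        kinds = [name for name, pattern in CLAIM_PATTERNS.items() if pattern.search(sentence)]
        if kinds:
            flagged.append((sentence, kinds))
    return flagged


# Made-up sample draft, used only to exercise the patterns.
draft = (
    "A 2019 industry study found that 63% of teams publish weekly. "
    "I think shorter intros read better. "
    "Tool X is faster than Tool Y because it caches results."
)

for sentence, kinds in flag_claims(draft):
    print(kinds, "->", sentence)
```

In this sample, the first and third sentences are flagged and the opinion in the middle is left alone. Every flagged sentence then goes through the “no source, no publish” rule.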

Source Quality & Evidence Standards

Not all sources are equal, and AI doesn’t understand that nuance by default.

During review, I ask:

- Is this a primary source or a secondary summary?

- Is the source current enough for this topic?

- Does it actually support the claim being made?

What I see most often is AI pulling from SEO-optimized listicles that cite each other in loops, or “industry reports” that turn out to be lead-gen whitepapers with selective data. On SaaS and marketing topics, especially, these sources look authoritative at a glance but rarely hold up under closer inspection.

My rule is simple:

- High-impact claims need primary or authoritative sources.

- If multiple sources disagree, I acknowledge the uncertainty instead of pretending there’s consensus.

I also add “last verified” context for time-sensitive information. That small step makes future updates much easier.
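If you track claims in a spreadsheet or script, the source rules and the “last verified” note can be recorded together. The sketch below is one possible shape for that record. The field names, the source-type labels, and the one-year freshness window are assumptions chosen for illustration, and the URL is a placeholder.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Assumed freshness window; fast-moving topics may need a shorter one.
MAX_AGE = timedelta(days=365)


@dataclass
class SourcedClaim:
    text: str
    source_url: Optional[str]
    source_type: str               # assumed labels: "primary", "authoritative", "secondary"
    high_impact: bool
    last_verified: Optional[date]


def review_issues(claim, today):
    """List the reasons this claim is not yet ready to publish."""
    issues = []
    if not claim.source_url:
        issues.append("no source")
    if claim.high_impact and claim.source_type not in ("primary", "authoritative"):
        issues.append("high-impact claim needs a primary or authoritative source")
    if claim.last_verified is None:
        issues.append("never verified")
    elif today - claim.last_verified > MAX_AGE:
        issues.append("verification is stale")
    return issues


# Placeholder claim, used only to show the checks firing.
claim = SourcedClaim(
    text="Placeholder pricing claim",
    source_url="https://example.com/roundup",
    source_type="secondary",
    high_impact=True,
    last_verified=date(2023, 6, 1),
)

for issue in review_issues(claim, today=date(2025, 1, 15)):
    print("-", issue)
```

An empty list means the claim meets the rules above. Anything else is a reason to go back to the source before publishing.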

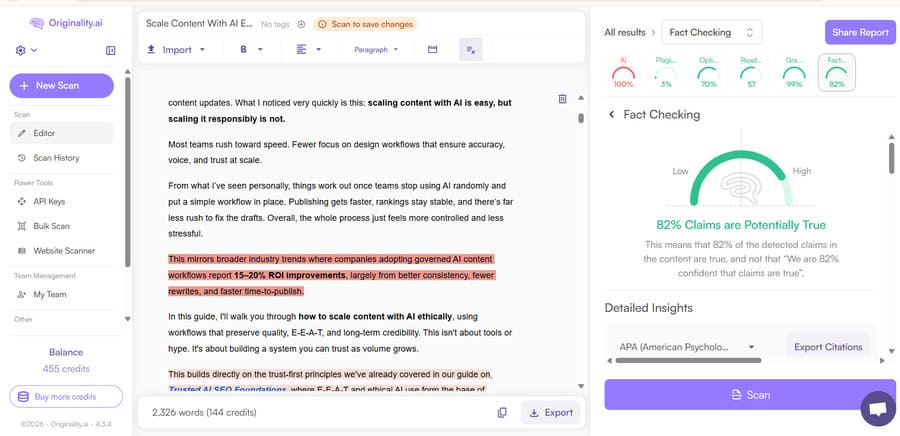

Hallucination & Fabrication Risk Checks

Even when sources look reasonable on the surface, AI can still invent details entirely, which is a different failure mode.

This is where AI tends to fail quietly.

Hallucinations rarely look ridiculous. They look confident.

I’ve learned to watch for patterns:

- Named experts without clear credentials

- Studies mentioned without publication details

- Overly precise numbers that aren’t widely cited

- Quotes that sound polished but oddly generic

One draft I approved early on cited “Dr. Sarah Chen from Stanford” to support a content strategy claim. A reader emailed us a week later to point out that no such person existed. Since then, I Google every named expert before approving a draft, no matter how plausible the reference sounds.

When I spot these, I don’t argue with the draft. I verify or delete.

If something can’t be confirmed, it doesn’t stay. No exceptions.

This approach works far better than relying on tools or intuition alone.
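A script can’t confirm whether an expert or a study exists, but it can help make sure nothing on the watch list above gets skimmed past. The following is a rough sketch under that assumption. The patterns and the `fabrication_flags` name are hypothetical, and each flag is a prompt to verify by hand, not a verdict.

```python
import re

# Hypothetical patterns for three of the warning signs listed above.
EXPERT = re.compile(r"\b(?:Dr|Prof)\.\s+[A-Z][a-z]+(?:\s+[A-Z][a-z]+)+")
STUDY = re.compile(r"\b(?:study|survey|report)\b", re.I)
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")
PRECISE_FIGURE = re.compile(r"\b\d+\.\d+\s?%")


def fabrication_flags(sentence):
    """Return manual-verification prompts for a single sentence."""
    flags = []
    expert = EXPERT.search(sentence)
    if expert:
        flags.append(f"verify named expert: {expert.group(0)}")
    if STUDY.search(sentence) and not YEAR.search(sentence):
        flags.append("study mentioned without a year")
    if PRECISE_FIGURE.search(sentence):
        flags.append("overly precise figure")
    return flags


# Made-up sentence built around the expert name from the anecdote above.
sample = "Dr. Sarah Chen from Stanford says a recent study shows 47.3% gains."

for flag in fabrication_flags(sample):
    print("-", flag)
```

The generic-quote pattern is left out on purpose. I don’t know of a simple rule that catches it reliably, so that one stays a human judgment call.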

Editorial Quality & Clarity Review

Once I trust the facts, I switch hats and review as an editor.

This is where the AI editorial checklist comes into play.

I look for:

- Repetition that adds length but not value

- Generic advice that could apply to any topic

- Sections that summarize common knowledge without adding insight

Once the draft is factually safe, the next step is to optimize AI content for Google with stronger structure, clearer intent alignment, and refined headings.

For example, I’ll often see a paragraph like: “It’s important to note that many businesses can benefit from AI when used correctly.” I’ll either cut it entirely or replace it with a concrete observation tied to the article’s context. If a sentence doesn’t add a specific insight, example, or decision point, it doesn’t stay.

If you want deeper guidance on improving voice and expertise at this stage, see Humanize AI Content: Practical Steps to Add Expertise & Voice.

Trust, Transparency & EEAT Signals

In practice, this means every published piece includes a named author, a clear “Reviewed by” line when AI is involved, and a visible last-updated date.

For higher-risk content, we also document what was reviewed (facts, sources, claims) and how readers can flag errors.

I don’t re-explain EEAT here. Instead, I implement it.

That means proper attribution, reviewer context, and a clear editorial trail. If you need the conceptual framework behind this, see E-E-A-T for AI Content.

Once these trust signals are clear, the next step is making sure they’re applied consistently, especially when more than one person is involved.

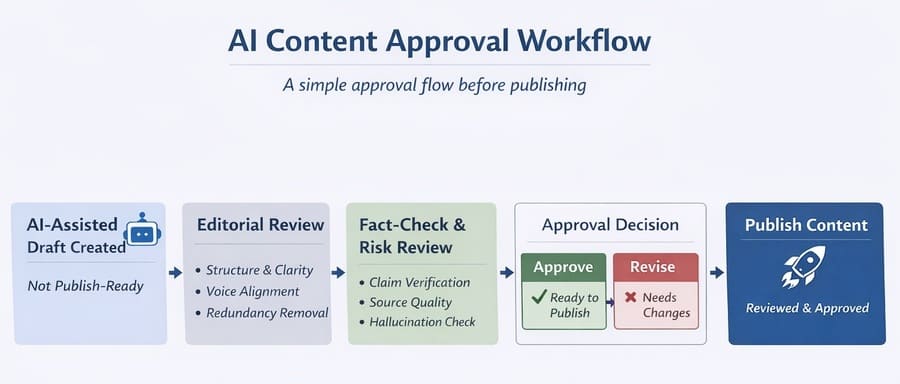

Turning the Checklist Into an AI Content Approval Process

A checklist only works if it fits into your workflow.

For solo creators, this can be as simple as a final self-review before publishing. For teams, it becomes an AI content approval process with defined roles.

Here’s what I’ve seen work:

- Editor checks structure, clarity, and completeness

- SME (when required) verifies technical accuracy

- Publisher gives final sign-off

The key is clarity. Everyone should know what they’re responsible for and when content is blocked from publishing.
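For teams that manage drafts in code or a lightweight CMS, the “blocked from publishing” rule can be made explicit. Here is a minimal sketch assuming the three roles above, with the SME required only when a draft is marked as needing one. The `Draft` class and function names are hypothetical, not part of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    title: str
    needs_sme: bool = False                      # set when the topic needs expert review
    signoffs: set = field(default_factory=set)   # roles that have already signed


def required_roles(draft):
    """Roles that must sign off before this draft can go live."""
    roles = ["editor"]
    if draft.needs_sme:
        roles.append("sme")
    roles.append("publisher")                    # the publisher's sign-off is always last
    return roles


def blocked_by(draft):
    """Roles still missing; an empty list means the draft is clear to publish."""
    return [role for role in required_roles(draft) if role not in draft.signoffs]


def sign_off(draft, role):
    """Record a sign-off, refusing the publisher's until everyone else has signed."""
    if role not in required_roles(draft):
        raise ValueError(f"{role!r} is not a required reviewer for this draft")
    if role == "publisher":
        waiting_on = [r for r in blocked_by(draft) if r != "publisher"]
        if waiting_on:
            raise ValueError(f"publisher cannot sign before: {', '.join(waiting_on)}")
    draft.signoffs.add(role)


draft = Draft("Sample how-to guide", needs_sme=True)
sign_off(draft, "editor")
print(blocked_by(draft))   # ['sme', 'publisher']
sign_off(draft, "sme")
sign_off(draft, "publisher")
print(blocked_by(draft))   # []
```

The design choice here is that the final sign-off cannot be recorded early, so “who is blocking this?” always has a concrete answer.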

Risk-Based Review: When the Checklist Is Not Enough

Not all content should pass through the same gate.

Over time, I started using a simple risk filter:

- Does this content influence decisions that could cause harm?

- Are the claims novel or widely accepted?

- Is the audience likely to act on this advice?

If the risk is high, the checklist alone isn’t enough. That’s when I escalate the review or delay publishing.
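The three questions can be turned into a small filter so the escalation decision is applied the same way every time. This is only a sketch. The threshold of two “yes” answers is an assumption I picked for illustration, and you may want a stricter rule for health, finance, or legal topics.

```python
def needs_escalation(could_cause_harm, claims_are_novel, audience_likely_to_act):
    """Return True when the checklist alone should not be the final gate.

    Assumed rule: two or more "yes" answers count as high risk.
    """
    yes_count = sum([could_cause_harm, claims_are_novel, audience_likely_to_act])
    return yes_count >= 2


def next_step(could_cause_harm, claims_are_novel, audience_likely_to_act):
    """Translate the risk answers into the action described above."""
    if needs_escalation(could_cause_harm, claims_are_novel, audience_likely_to_act):
        return "escalate the review or delay publishing"
    return "run the checklist and publish if it passes"


# Hypothetical example: a how-to with novel claims that readers will act on.
print(next_step(could_cause_harm=False, claims_are_novel=True, audience_likely_to_act=True))
```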

For deeper post-publish checks and accountability, follow a structured AI content audit process before scaling or updating AI-assisted pages.

Common Mistakes Teams Make When Reviewing AI Content

The most damaging mistakes I see aren’t technical; they’re procedural. Two come up repeatedly in real publishing workflows.

- Treating a review as proofreading

- Trusting citations without opening them

In one internal audit I ran, nearly 40% of cited sources were either broken links, paywalled abstracts no one had read, or secondary summaries misrepresenting the original data. The content looked “reviewed,” but no one had actually verified the claims.

The biggest mistake, though, is assuming AI drafts are neutral. They’re not. They reflect patterns, assumptions, and sometimes errors from their training data.

A checklist exists to counterbalance that.

FAQ: AI Content Review & Approval

These are the most common questions I hear when teams start formalizing their AI review process.

Who should review AI-generated content before publishing?

– At a minimum, someone accountable for accuracy and clarity. For higher-risk topics, this includes a subject matter expert.

Does Google penalize AI content if it’s reviewed by humans?

– No. Google evaluates content based on quality, usefulness, and trust signals, not whether AI was involved. What matters is that AI-assisted content is reviewed, accurate, and created to help users rather than to manipulate search rankings.

How detailed should fact-checking be?

– For low-risk posts, I verify statistics and named claims. For YMYL topics, I verify every factual statement against primary sources and won’t publish case studies without direct confirmation from the company or individual mentioned.

Is a checklist enough for YMYL topics?

– Usually not. A checklist is a starting point, not a replacement for expert review.

Should AI-assisted content disclose AI usage?

– Disclosure isn’t always required. However, for YMYL or high-impact content, clearly indicating human review or editorial oversight helps establish accountability and trust, especially when readers may act on the information.

Final Pre-Publish Decision: Is This Content Safe to Publish?

After reviewing hundreds of AI-assisted drafts, the most consistent risk I see is AI confidently filling knowledge gaps with plausible-sounding connective tissue. The writing feels complete, but the facts underneath often aren’t.

AI makes publishing easier, but it also makes publishing carelessly easier.

This AI content review checklist is my way of slowing things down just enough to protect accuracy, trust, and long-term credibility. It’s not about perfection. It’s about responsibility.

If the content passes this review, I publish confidently. If it doesn’t, I fix it or hold it back.

That mindset (publish when ready, not when rushed) has mattered more for long-term results than any tool or shortcut I’ve tested.