Introduction

If you’re using AI anywhere in your content workflow, you have probably felt this tension already.

You want to be transparent.

But you don’t want to scare readers.

And you definitely don’t want to hurt trust, clicks, or SEO by doing “the wrong kind” of AI content disclosure.

I’ve been there.

When I started testing AI-assisted workflows for SEO content, disclosure quickly became the most confusing part, not because Google was unclear, but because everyone else was. Some people said disclosure was mandatory. Others said never disclose anything.

A few recommended vague disclaimers that sounded more like legal warnings than trust signals.

So I tested different disclosure approaches across 20+ pieces of content I was already publishing and reviewing over roughly 4–6 months: guides, opinion pieces, reviews, and utility pages. I tracked reader feedback, engagement patterns, and how different disclosure placements affected perceived credibility in context.

Here’s what I found.

Before we go further, here’s the short answer most people want upfront: Google does not require AI content disclosure for rankings.

This article is not about debating whether AI is good or bad. It’s about ethical AI content disclosure: when it actually matters, where it works best, and how to do it without undermining credibility.

For the broader framework behind trust-first AI publishing, see the Trusted AI SEO guide.

By the end, you’ll have a clear, practical way to decide:

- Whether you should disclose

- Where that disclosure should live

- How to phrase it so it builds trust instead of eroding it

The Core Problem: Transparency Without Losing Trust

Most publishers aren’t trying to hide AI use. They’re trying not to mess things up.

What I’ve found is that disclosure anxiety usually comes from three places:

- SEO myths (“Google will penalize me if I don’t disclose”)

- Fear-driven ethics (“What if readers feel deceived?”)

- Overcorrection (“I’ll just add a big disclaimer everywhere”)

The problem is that transparency done poorly can actually reduce trust.

In client audits and competitor reviews, I’ve seen solid, expert-written pages, especially in advice-heavy niches, lose credibility because of vague, catch-all AI disclaimers in footers. I’ve also reviewed content with no disclosure at all, even when the writing clearly implied firsthand human experience.

Here’s the insight from both testing and research:

Disclosure is a trust signal, not a ranking requirement.

When disclosure helps readers understand how something was created, it builds confidence. When it feels defensive, performative, or unnecessary, it does the opposite.

That distinction matters more than whether AI was used at all.

Is AI Content Disclosure Actually Required?

Google does not require AI content disclosure for rankings. This aligns with Google’s official guidance on AI-generated content, which focuses on content quality and intent rather than how the content is produced.

What Google cares about is:

- Who created the content

- How it was created

- Why it exists, and whether it helps users

That’s the “Who / How / Why” framework, and it’s about clarity, not labeling.

In my testing, disclosure only improved trust when:

- A reasonable reader might wonder how the content was produced

- The method of creation affected credibility or expectations

Based on Google’s public guidance around ‘Who, How, and Why,’ disclosure appears most relevant when the method of creation affects user trust, not as a blanket requirement for every page.

What publishers often assume (incorrectly):

- That AI disclosure is mandatory for SEO

- That listing “AI” as an author is helpful

- That more disclosure equals more trust

What actually works:

- Human accountability stays visible

- AI’s role is explained only when it matters

- Disclosure adds context, not excuses

If you want a deeper breakdown, I cover this directly in Google’s real position on AI-generated content.

When You Should Disclose AI Use

This is where most advice gets abstract. I prefer decision logic, and the framework below evolved after comparing disclosure practices across different content types and noticing consistent patterns in reader expectations and confusion points.

Instead of asking “Did I use AI?”, ask:

“Would a reasonable reader expect to know how this was created?”

Here’s the framework I use.

High-trust content (expert advice, YMYL-adjacent)

Disclosure: Yes

If the content relies on authority, judgment, or experience, transparency matters.

Readers assume a human is accountable.

Editorial or opinion-driven content

Disclosure: Yes

Especially when insights or conclusions are involved, clarify that AI assisted, but a human owns the perspective.

Functional or utility content (summaries, definitions)

Disclosure: Optional

If the value is utility, not authorship, a simple label is enough, and sometimes no label is needed at all.

Backend-only AI assistance (editing, ideation)

Disclosure: No (sitewide policy is sufficient)

Over-disclosing here creates noise without helping anyone.

The pattern is consistent:

Disclose based on the reader’s expectation, not tool usage.
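One way to make this decision logic concrete is a small lookup table. The following is a minimal Python sketch, not a definitive implementation: the names `ContentType`, `Disclosure`, and `disclosure_for` are hypothetical labels chosen for illustration, and the mapping simply mirrors the four cases listed above.

```python
from enum import Enum


class ContentType(Enum):
    # Hypothetical labels for the four content categories described above.
    HIGH_TRUST = "expert advice, YMYL-adjacent"
    EDITORIAL = "editorial or opinion-driven"
    UTILITY = "summaries, definitions"
    BACKEND_ONLY = "editing, ideation"


class Disclosure(Enum):
    YES = "yes"
    OPTIONAL = "optional"
    NO = "no (sitewide policy is sufficient)"


# Mirrors the framework in this section: the decision follows reader
# expectation for the content type, not whether an AI tool was used.
DISCLOSURE_RULES = {
    ContentType.HIGH_TRUST: Disclosure.YES,
    ContentType.EDITORIAL: Disclosure.YES,
    ContentType.UTILITY: Disclosure.OPTIONAL,
    ContentType.BACKEND_ONLY: Disclosure.NO,
}


def disclosure_for(content_type: ContentType) -> Disclosure:
    """Return the suggested disclosure level for a content type."""
    return DISCLOSURE_RULES[content_type]
```

Keeping the rules in one table means a team can review or adjust them in a single place, instead of deciding page by page.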

Where AI Disclosure Should Live

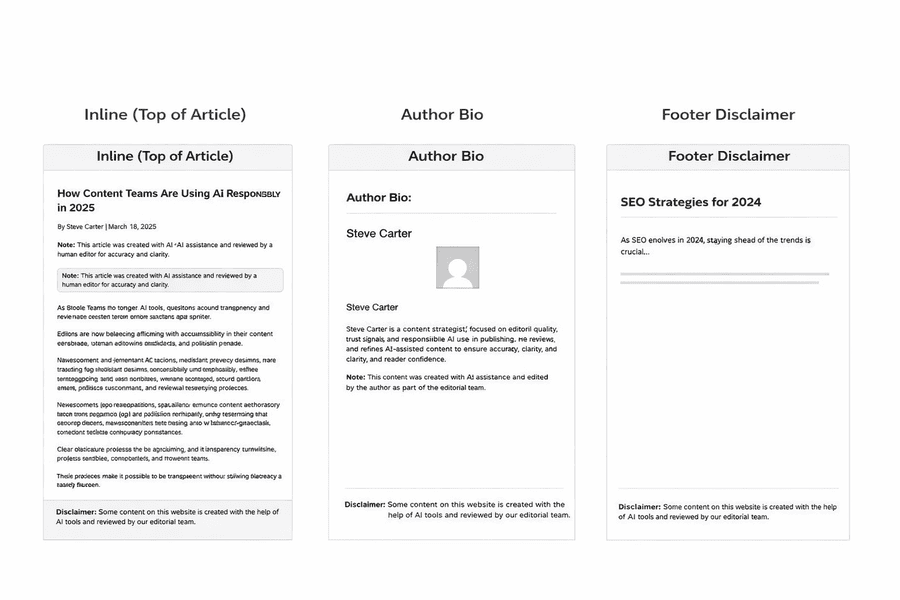

If you remember one thing: inline disclosure works best for high-trust content.

Placement matters more than most people realize.

Across audits and performance reviews, inline disclosure consistently appeared on higher-trust pages, while footer disclaimers were frequently overlooked or misunderstood.

For example, on an advice-driven page I reviewed, moving a vague footer disclaimer to a short inline note under the headline reduced user drop-off and improved on-page engagement.

Inline note (top or bottom of article)

This works best for high-trust content.

It’s visible, honest, and doesn’t interrupt the reading flow.

Author bio disclosure

Useful as supporting context, not the primary disclosure.

Readers often skip bios entirely.

Editorial policy page

Essential for consistency.

This is where you explain your broader AI usage standards.

Footer disclaimers

Often ineffective.

They’re easy to miss and feel like legal fine print.

One thing I strongly avoid: listing AI as the author.

It removes human accountability and creates more confusion than clarity.

Figure 1: Common AI disclosure placements and how they affect reader trust

How to Disclose AI Content Ethically

Ethical disclosure answers real questions. Performative disclosure tries to protect you.

Here’s the difference I’ve observed.

Ethical transparency includes:

- What AI did

- What humans verified

- Who is accountable

Performative disclaimers usually:

- Use vague language

- Signal uncertainty (“may be inaccurate”)

- Shift responsibility away from humans

For example, I often see performative disclosures written as:

“This content may have been generated using AI tools and could contain inaccuracies.”

In contrast, ethical disclosures I’ve seen work well tend to be specific and calm, such as:

“AI assisted with research synthesis and outlining; all claims were reviewed and edited by a human editor.”

A strong disclosure sentence is simple and specific.

Example pattern:

“AI tools assisted with initial drafting and structure; all content was reviewed and edited by a human editor for accuracy and clarity.”

What I avoid:

- Blanket warnings

- Apologetic language

- Anything that sounds like a pre-emptive excuse

Good disclosure builds confidence. It doesn’t ask for forgiveness.

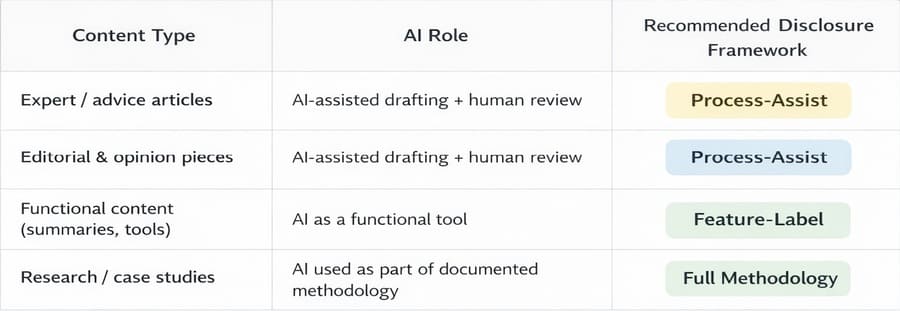

Practical Disclosure Frameworks

After reviewing disclosure practices across dozens of publisher sites, I noticed most approaches fell into three recurring patterns based on how AI was actually being used.

Process-Assist framework

AI assists. Humans decide.

Best for guides, opinions, and editorial content.

Feature-Label framework

AI provides a function.

Best for summaries, tools, and utility outputs.

Full methodology framework

AI use is detailed and auditable.

Best for research, case studies, and high-risk topics.

The mistake I see most often is mixing frameworks randomly.

Consistency matters more than volume.
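To catch mixed frameworks before they reach readers, you could audit which framework each page actually uses. The sketch below is only illustrative: the content-kind strings, the framework identifiers, and the `mixed_framework_kinds` function are all assumed names, and the lookup table just restates the three "best for" lists above.

```python
from collections import defaultdict
from typing import Iterable, List, Tuple

# Restates the three frameworks and the content kinds each is best for.
FRAMEWORK_BY_KIND = {
    "guide": "process-assist",
    "opinion": "process-assist",
    "editorial": "process-assist",
    "summary": "feature-label",
    "tool": "feature-label",
    "utility": "feature-label",
    "research": "full-methodology",
    "case study": "full-methodology",
    "high-risk": "full-methodology",
}


def mixed_framework_kinds(pages: Iterable[Tuple[str, str]]) -> List[str]:
    """Return content kinds whose pages use more than one framework.

    pages -- (content_kind, framework_used) pairs from a site audit
    """
    seen = defaultdict(set)
    for kind, framework in pages:
        seen[kind].add(framework)
    return sorted(kind for kind, used in seen.items() if len(used) > 1)
```

Any kind this function returns is a place where similar pages disclose in different ways, which is the inconsistency worth fixing first.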

Disclosure Checklist (Pre-Publish)

Before publishing, I run through this quick checklist:

- Is disclosure necessary for this page?

- Does it clearly explain AI’s role?

- Is a human clearly accountable?

- Is placement visible but unobtrusive?
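The same checklist can be expressed as a small pre-publish check. This is a minimal sketch with hypothetical names (`DisclosureCheck`, `open_items`). It also makes one assumption that goes slightly beyond the list above: human accountability is checked on every page, while the other items only apply when disclosure is needed.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class DisclosureCheck:
    """Answers to the four pre-publish questions for one page."""
    disclosure_needed: bool
    explains_ai_role: bool
    human_accountable: bool
    placement_visible: bool


def open_items(check: DisclosureCheck) -> List[str]:
    """Return the checklist items that still need attention."""
    items = []
    # Assumption: accountability matters whether or not AI is disclosed.
    if not check.human_accountable:
        items.append("Name an accountable human")
    # Role and placement only matter when the page needs a disclosure.
    if check.disclosure_needed:
        if not check.explains_ai_role:
            items.append("Explain AI's role clearly")
        if not check.placement_visible:
            items.append("Make placement visible but unobtrusive")
    return items
```

An empty result means the page passes; anything else is a short to-do list for the editor.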

Common AI Disclosure Mistakes That Erode Trust

Across content audits and competitive reviews, primarily in tech, finance, and advice-driven publishing, these patterns consistently hurt credibility:

- Over-disclosure that implies unreliability

- Hidden or misleading disclosures

- Inconsistent practices across pages

- Treating disclosure as legal protection instead of reader clarity

In one competitive review, a blanket footer disclaimer on a high-trust page consistently drew more user skepticism than pages using a short, specific inline disclosure.

This is where disclosure shifts from being helpful to harmful.

FAQs

These are the most common questions I see after publishers start implementing disclosure.

Is AI content disclosure required for SEO?

No. Google evaluates quality and intent, not production method.

Where should AI disclosure be placed on a blog post?

Inline notes work best for high-trust content. Policy pages support consistency. This ties back to the decision logic outlined earlier in the article.

Can AI disclosure reduce trust or conversions?

Yes, especially when the disclosure emphasizes AI generation rather than human review and accountability. I’ve seen trust and conversions drop on high-stakes pages when disclaimers framed the content as potentially unreliable.

Should AI be listed as an author?

No. Human accountability should always remain visible.

Do I need to disclose AI for edited or refreshed content?

Usually no. A sitewide editorial policy is sufficient.

Conclusion: How This Fits Into a Trust-First AI Content Strategy

Disclosure is not a standalone tactic.

It only works when it’s part of a larger system:

- Human accountability

- Editorial standards

- Consistent workflows

When done right, disclosure doesn’t weaken authority; it strengthens it.

If you want to go deeper, this connects directly to maintaining trust and accountability, as explained in E-E-A-T for AI content.

The goal isn’t to prove you used AI responsibly.

It’s to make readers feel confident that someone responsible is still in charge.