Introduction: Scaling Content Fast Without Breaking Trust

If you’re trying to scale content with AI right now, you’re probably feeling two things at once.

On one hand, AI makes publishing faster than ever. On the other hand, there’s this constant fear in the background: What if this hurts quality? What if Google stops trusting my site? What if readers can tell this is AI-heavy content?

I’ve been there.

Over the past year, I’ve tested AI-assisted workflows across blog content, SEO pages, and content updates. What I noticed very quickly is this: scaling content with AI is easy, but scaling it responsibly is not.

Most teams rush toward speed. Fewer teams focus on designing workflows that ensure accuracy, voice, and trust at scale.

From what I’ve seen personally, things work out once teams stop using AI randomly and put a simple workflow in place. Publishing gets faster, performance remains consistent, and there’s far less pressure to rush fixes at the draft stage. One thing that surprised me was how much easier editorial reviews became once expectations were clearly defined upfront.

This also aligns with broader industry observations, where many teams report ROI improvements after adopting AI workflows, mainly driven by efficiency gains, reduced rework, and faster publishing cycles.

In this guide, I’ll walk you through how to scale content with AI ethically, using workflows that preserve quality, E-E-A-T, and long-term credibility. This isn’t about tools or hype. It’s about building a system you can trust as volume grows.

This builds directly on the trust-first principles we’ve already covered in our guide on Trusted AI SEO Foundations, where E-E-A-T and ethical AI use are positioned as core signals for long-term content credibility.

What “Scaling Content With AI” really means

Here’s the thing most articles get wrong.

Scaling is not mass production. It’s about increasing output without sacrificing quality, accuracy, or trust.

When people talk about scaling content with AI, they often mean publishing more pages faster. But real scaling isn’t about volume alone.

True scaling means:

- Consistent publishing without quality compromise

- Reliable accuracy across hundreds of pages

- A recognizable brand voice and tone, even with AI assistance

- Editorial control that is consistent even under volume

When I tested aggressive AI-only workflows, output went up, but so did the problems. Duplicated phrasing, superficial explanations, and small inaccuracies became easy to miss.

Why teams want to scale content with AI

There’s a reason this topic keeps coming up:

- Smaller teams want to compete with large content operations

- SEO requires broader topic coverage than before

- Content refresh cycles are getting shorter

- Budgets aren’t growing at the same pace as content needs

AI does help here, but only if it’s used responsibly.

Why ethical AI content creation matters

At scale, small mistakes multiply.

Without proper controls, AI content can lead to:

- Hallucinated facts

- Inconsistent brand voice

- Weak E-E-A-T

- Reader trust breakdown

- Increased editorial cleanup later

Ethical AI content creation isn’t about being nice. It’s about protecting your site from long-term damage.

Google has also clarified that the use of AI itself isn’t the issue—what matters is whether content is helpful, accurate, and people-first, as outlined in its guidance on AI-generated content.

If you’ve noticed AI drafts sounding technically correct but emotionally flat, this is the same issue I broke down in Humanize AI Content, where tone and experience often get lost at scale.

Common Mistakes When Teams Scale Content With AI

Before we talk solutions, it’s important to call out what usually goes wrong.

From my own testing and reviewing other sites, these issues come up repeatedly:

- Over-automation of judgments

Letting AI decide angles, claims, or conclusions without human review.

- Publishing without fact-checking

Especially dangerous for SEO content that includes statistics or advice.

- Ignoring subject-matter expertise

AI drafts replace real experience instead of supporting it.

- No automation boundaries

Everything gets automated, whether it should be or not.

- Inconsistent voice across pages

Readers notice, but algorithms don’t immediately.

- No review ratio

Teams don’t define how much content must be reviewed by humans.

This is exactly why I created a structured AI Content Quality & E-E-A-T Checklist—to make sure speed never replaces judgment.

Once you see these patterns, it becomes clear that you need a structured, ethical workflow, not just better prompts.

Ethical Scaling Framework

This is the framework I’ve refined while working with AI-assisted content. It’s designed to scale output without sacrificing trust. To make this framework easier to apply, I often think about it as a phased rollout rather than a one-time switch:

- Weeks 1–2: Strategy & governance (define boundaries, audit existing content, set disclosure rules)

- Weeks 3–4: Tool selection and workflow design (research, drafting, review checkpoints)

- Weeks 5–6: Pilot content run (small batch, full review, performance validation)

- Weeks 7+: Gradual scaling with a defined Human Review Rate (HRR) and safeguards

This phased approach reduces risk early and prevents teams from scaling broken processes.

Stage 1: Strategy & Governance (Before You Scale)

This stage prevents most problems later.

Before using AI at scale, I define:

- Automation boundaries

What AI can assist with vs. what stays human-only (final claims, advice, conclusions).

- Topic risk tiers

High-risk topics (health, finance, legal) require deeper review.

- Human-in-the-loop rules

Every piece must pass through a defined human checkpoint.

- Disclosure approach

How AI assistance is acknowledged transparently.

I recommend documenting a clear disclosure statement that sets expectations without undermining trust. A simple example I’ve used successfully is:

“This content was created with the assistance of AI tools and reviewed by a human editor for accuracy, clarity, and relevance.”

This level of disclosure is clear, honest, and aligned with current SEO ethics guidance—without over-explaining or drawing unnecessary attention to the tooling.

Skipping this stage is the fastest way to lose control.

Before scaling anything new, I also recommend starting with a content audit using an E-E-A-T-focused checklist. Auditing existing pages first helps identify where accuracy, experience, or trust signals are already weak—so you’re not scaling problems along with output. In practice, this step sets a baseline for quality and makes later AI-assisted scaling far safer.
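One way to keep these governance decisions from living only in people’s heads is to write them down as data. The sketch below is purely illustrative: the `GovernancePolicy` class, the task names, and the "standard" tier label are my own assumptions, not a standard or a feature of any particular tool.

```python
from dataclasses import dataclass


# Illustrative governance policy. Every name and value here is an
# assumption made for this sketch; replace them with your own rules.
@dataclass(frozen=True)
class GovernancePolicy:
    ai_allowed_tasks: frozenset
    human_only_tasks: frozenset
    high_risk_topics: frozenset
    disclosure: str

    def can_automate(self, task: str) -> bool:
        """AI may assist only with tasks that are allowed and not human-only."""
        return task in self.ai_allowed_tasks and task not in self.human_only_tasks

    def risk_tier(self, topic: str) -> str:
        """High-risk topics get deeper review; everything else is 'standard' here."""
        return "high" if topic in self.high_risk_topics else "standard"


POLICY = GovernancePolicy(
    ai_allowed_tasks=frozenset({"outline", "draft", "serp_analysis"}),
    human_only_tasks=frozenset({"final_claims", "advice", "conclusions"}),
    high_risk_topics=frozenset({"health", "finance", "legal"}),
    disclosure=(
        "This content was created with the assistance of AI tools and "
        "reviewed by a human editor for accuracy, clarity, and relevance."
    ),
)
```

Even if you never run code like this, writing the policy in one place makes it much easier to spot a task or topic nobody has made a decision about.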

Stage 2: Research & Planning With AI

AI is extremely effective here when used correctly.

I use AI for:

- SERP analysis and intent mapping

- Keyword clustering and content gaps

- Competitor pattern recognition

- Outline structuring

But final decisions, positioning, and prioritization stay human-directed.

This keeps AI in a supporting role, not a decision-maker.

Tool choice matters here, which is why I recommend reviewing How to Choose the Right AI Writing Tool before locking yourself into a single platform.

Stage 3: Ethical Drafting (AI-Assisted)

This is where most teams go wrong.

I let AI generate:

- Structured drafts

- Section outlines

- Supporting explanations

But I require:

- Manual review of all claims

- Forced originality checks

- Human rewriting of intros and conclusions

- Brand-voice edits before optimization

AI speeds up drafting. Humans protect meaning.

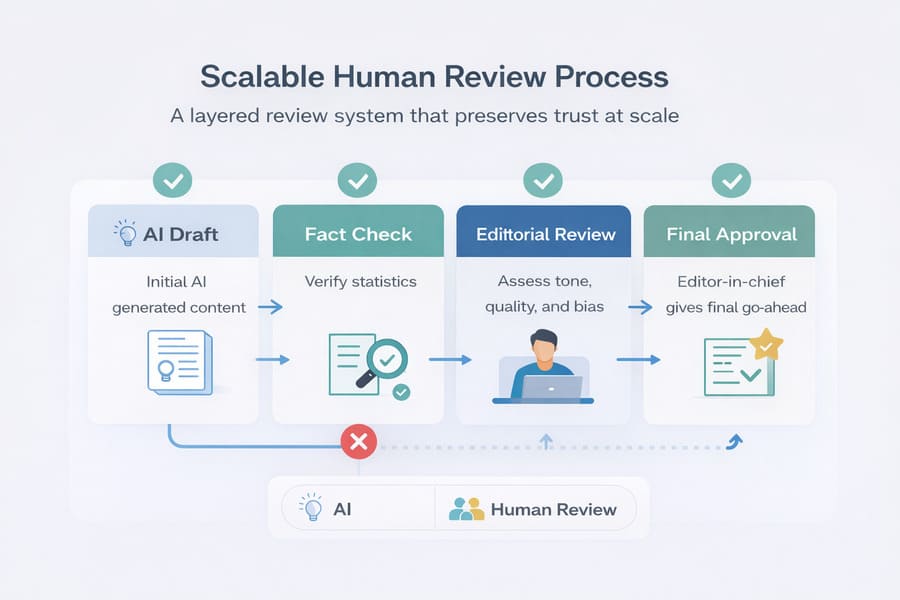

Stage 4: Scalable Human Review

At scale, review systems must be intentional.

My review workflow includes:

- Editorial accuracy checks

- Bias and tone review

- Fact verification for statistics and examples

- Human Review Rate (HRR)

A set portion of content is thoroughly reviewed, not just quickly checked.

To make HRR actionable, I use a simple risk-based model rather than a generic rule:

- High-risk topics (health, finance, legal, YMYL) → 100% human review

- Medium-risk topics (SEO strategy, tool comparisons, process guides) → 30–50% review

- Low-risk topics (definitions, basic explainers, internal updates) → 10–20% review

This approach keeps review effort proportional to risk, so quality stays stable as volume grows.
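To show how a risk-based HRR could be applied consistently, here is a minimal sketch. The exact rates for the medium and low tiers (40% and 15%) are assumptions I picked from inside the ranges above, and the `needs_full_review` function and its hashing approach are illustrative choices, not a prescribed method.

```python
import hashlib

# Assumed review rates per risk tier, chosen from within the ranges above.
REVIEW_RATES = {"high": 1.0, "medium": 0.40, "low": 0.15}


def needs_full_review(content_id: str, risk_tier: str) -> bool:
    """Decide whether a piece gets a thorough human review.

    The decision is derived from a hash of the content ID, so the same
    piece always gets the same answer and nobody can re-roll the sample.
    """
    rate = REVIEW_RATES[risk_tier]
    digest = hashlib.sha256(content_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate
```

Hashing the ID instead of calling a random number generator is a deliberate choice in this sketch: the sample stays stable between runs, which makes the review queue auditable.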

Stage 5: Compliance, Authenticity & Publishing

Before publishing, I ensure:

- Clear author attribution

- Proper citations wherever required

- Transparency around AI assistance

- SEO optimization without rewriting the meaning

- Update tracking for freshness

At this stage, I apply the same rule to optimization that I apply to drafting: adjust structure and match search intent, without changing the original meaning just to please tools.

This is where ethical AI content production becomes visible to readers—not hidden.
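The pre-publish checks above can be turned into a simple gate. This is a sketch under assumptions: the `publish_blockers` function and the page field names (`author`, `citations`, `has_factual_claims`, `ai_assisted`, `disclosure`, `last_reviewed`) are hypothetical and would need to match whatever your CMS actually stores.

```python
def publish_blockers(page: dict) -> list:
    """Return the trust items still missing; an empty list means ready to publish.

    Field names are hypothetical and only illustrate the checklist.
    """
    blockers = []
    if not page.get("author"):
        blockers.append("missing author attribution")
    if page.get("has_factual_claims") and not page.get("citations"):
        blockers.append("factual claims without citations")
    if page.get("ai_assisted") and not page.get("disclosure"):
        blockers.append("missing AI disclosure")
    if not page.get("last_reviewed"):
        blockers.append("missing last-reviewed date")
    return blockers


draft = {"author": "", "has_factual_claims": True, "ai_assisted": True}
for problem in publish_blockers(draft):
    print(problem)
```

Note that SEO optimization is left out of the gate on purpose: it is a judgment call about meaning, which I keep with the editor rather than a script.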

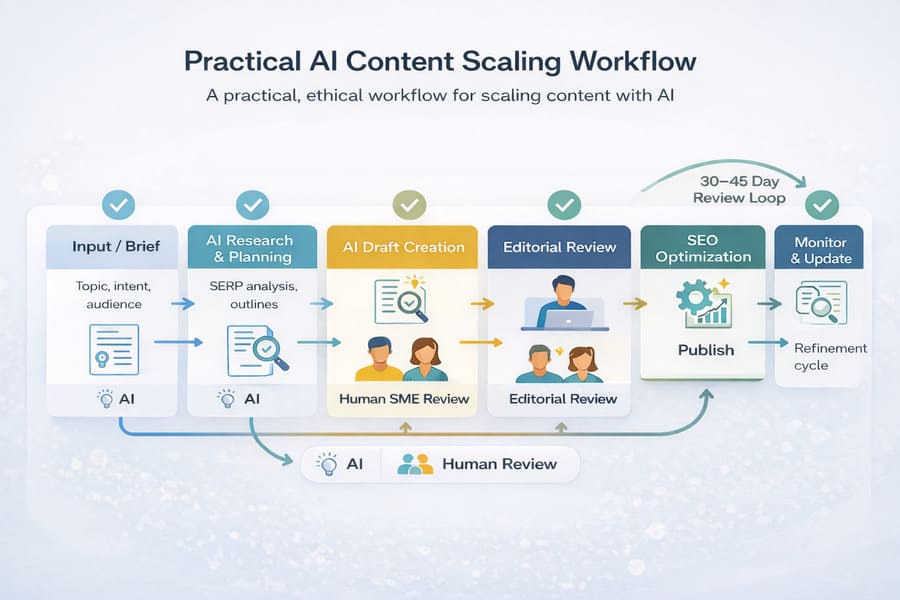

Step-by-Step Scaling Workflow

Here’s how this looks in practice for a single blog post.

Workflow overview:

Input → Research → AI Draft → SME Review → Editor Review → SEO Optimization → Publish → Monitor

Step-by-step:

- Define intent and audience problem

- AI-assisted SERP and outline generation

- AI produces a structured draft

- Subject-matter input added

- Editor refines tone and clarity

- SEO tools adjust structure — not meaning

- Final trust check and publish

- Review performance after 30–45 days

This workflow scales cleanly across teams.
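If your CMS or task tracker allows it, the workflow overview can be enforced rather than just documented. Here is a minimal sketch that treats the stages as an ordered list and refuses to skip a checkpoint. The stage identifiers and the `advance` function are names I made up for illustration.

```python
# Stage names mirror the workflow overview; the identifiers are illustrative.
STAGES = [
    "input",
    "research",
    "ai_draft",
    "sme_review",
    "editor_review",
    "seo_optimization",
    "publish",
    "monitor",
]


def advance(current: str, target: str) -> str:
    """Move a piece to the next stage, refusing to skip any checkpoint."""
    position = STAGES.index(current)  # raises ValueError for unknown stages
    if position + 1 >= len(STAGES):
        raise ValueError(f"'{current}' is the final stage")
    expected = STAGES[position + 1]
    if target != expected:
        raise ValueError(
            f"cannot move from '{current}' to '{target}'; next stage is '{expected}'"
        )
    return target
```

With this in place, `advance("ai_draft", "sme_review")` succeeds, while trying to jump from `"ai_draft"` straight to `"publish"` raises an error instead of quietly bypassing both reviews.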

Trust Safeguards for Scaled Content

From experience, these safeguards matter most:

- Hallucination detection

- Plagiarism checks

- Brand-voice consistency checks

- Bias review for sensitive topics

- Legal and compliance review, where applicable

- Multi-model validation for critical content

In practice, this means cross-checking critical sections with two different AI models. For example, draft with one model and validate claims or summaries with another.

I reserve this for high-impact content—statistics, recommendations, or conclusions—where hallucinations or bias would be costly. When multiple models agree, confidence increases; when they diverge, it’s a clear signal for human review.
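As a rough sketch of that cross-check, the function below compares verdicts from two models on each claim and flags the ones that need a human. It does not call any real AI API: `model_a` and `model_b` are placeholder callables you would wire up yourself, and flagging claims that both models reject is my own assumption on top of the divergence rule.

```python
from typing import Callable, List

# A verdict function returns True if the model judges the claim supported.
# Both callables are placeholders; no real AI API is assumed here.
Verdict = Callable[[str], bool]


def claims_for_human_review(
    claims: List[str], model_a: Verdict, model_b: Verdict
) -> List[str]:
    """Return claims where the two models diverge, or where both reject."""
    flagged = []
    for claim in claims:
        a, b = model_a(claim), model_b(claim)
        if a != b:
            flagged.append(claim)  # divergence: clear signal for review
        elif not a:
            flagged.append(claim)  # assumption: agreed rejection also needs a human
    return flagged
```

Agreement between models raises confidence, but it is not proof. For high-risk topics, I would still route everything through human review regardless of what this returns.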

These steps may seem slow, but they prevent cleanup later.

Tools for Ethical Scaling

I don’t believe in an “all-in-one” tool.

Instead, I test combinations:

- AI writing tools for drafting and ideation

- SEO tools for structure and relevance

- Originality and fact-checking tools for verification

- CMS workflows that enforce review stages

The tool matters less than how it’s used inside your workflow.

Dynamic personalization at scale:

One area where AI adds real value in 2025 is content personalization—adjusting examples, CTAs, or supporting sections based on audience segments or intent. When done responsibly, AI can help tailor content without changing core facts or claims, keeping the primary article stable while improving relevance for different users. I treat personalization as a layer on top of reviewed content, not a replacement for it.
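To make the "layer on top" idea concrete, here is a small sketch in which the reviewed body is immutable and only the call to action varies by segment. The `ReviewedArticle` class, the `render` function, and the segment names are hypothetical examples, not part of any specific platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewedArticle:
    """Reviewed core content. Frozen so personalization cannot alter it."""
    body: str
    default_cta: str


def render(article: ReviewedArticle, segment: str, cta_by_segment: dict) -> str:
    """Attach a segment-specific CTA to the unchanged, reviewed body."""
    cta = cta_by_segment.get(segment, article.default_cta)
    return f"{article.body}\n\n{cta}"


article = ReviewedArticle(
    body="Reviewed article text with verified claims.",
    default_cta="Read the full guide.",
)
ctas = {"agency": "See the agency workflow template."}  # hypothetical segments
print(render(article, "agency", ctas))
```

The design choice here is that personalization can only append or swap pre-approved pieces. It never gets write access to the facts and claims that already passed review.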

For a broader comparison of platforms and pricing, I’ve shared a hands-on breakdown in Best AI Content Writing Tools in 2025, based on real testing and trade-offs.

E-E-A-T at Scale

If you’re new to how E-E-A-T applies specifically to AI-assisted publishing, start with E-E-A-T for AI Content, then come back to this scaling framework.

Ethical AI workflows naturally fit into the E-E-A-T framework:

- Experience comes from human insight

- Expertise is preserved through review

- Authority is strengthened with consistency

- Trust grows through transparency

If you’ve already read our guides on E-E-A-T for AI Content and humanizing AI content, this framework extends those principles into scalable systems.

Mistakes to Avoid When Scaling SEO Content With AI

Even with good tools, avoid:

- Publishing without review

- Letting AI write advice without human review

- Treating AI as a replacement for thinking

- Ignoring feedback loops

- Scaling before governance is defined

Scaling magnifies both strengths and weaknesses.

The most common mistake I see? Chasing the lowest cost instead of the right balance.

Yes, heavy automation looks cheaper at first. But it often creates more work later: extra rewrites, inconsistent quality, and content that slowly loses trust. What’s worked best in my experience isn’t the cheapest setup, but the smartest one: spending more human time where the risk is higher, and letting AI move faster where the risk is low. That’s how you keep quality high and still benefit from AI speed.

Where AI Content Scaling Is Headed Next

From what I’m seeing:

- Multi-agent workflows will grow:

By multi-agent workflows, I mean setups where different AI systems handle specific roles—for example, one model for research, another for drafting, and a third for validation—rather than relying on a single tool for everything. When applied properly, this reduces blind spots and makes errors easier to catch, especially when scaling.

- Origin and verification will matter more

- Editorial roles will shift from writing to quality control

- Trust will become a ranking determinant

The future isn’t AI-only or human-only. It’s a well-governed collaboration.

Conclusion: The Only Sustainable Way to Scale Content With AI

Scaling content with AI isn’t the hard part anymore.

But scaling it without losing trust is.

From everything I’ve tested, the winning approach is clear:

- Human-driven strategy

- AI-assisted execution

- Ethical workflows

- Transparent publishing

If you design your workflows this way, AI becomes a multiplier—not a liability.

FAQs:

Can AI content rank if it’s scaled responsibly?

Yes—when quality, accuracy, and E-E-A-T are protected.

Do I need to disclose AI usage?

Transparency builds trust. I recommend clear, simple disclosures.

How much content should be human-reviewed?

Define a Human Review Rate based on risk and topic sensitivity.

Is scaling content with AI a risk to SEO?

It’s risky without governance. With ethical workflows, it’s sustainable.

How long does it realistically take to scale content with AI?

Most teams start to see meaningful progress within several weeks to a few months when they begin with a small pilot. The biggest delays usually come from skipping governance or review setup—not from the AI itself. Once workflows are defined, scaling becomes much smoother.

Do I need expensive AI tools to scale content responsibly?

No. I’ve seen effective workflows built with modest tools and clear processes. What matters more than price is how tools fit into your review and governance system. Expensive tools won’t fix weak workflows.

How big does my team need to be to scale content with AI?

You don’t need a large team. Many setups work well with just one strategist, one editor, and AI support—especially at the start.

As volume grows, strong review roles matter more than simply adding more writers.

Can AI scale content for SEO without causing keyword cannibalization?

Yes—but only if content planning is intentional. Clear topic mapping, defined intent per page, and regular audits prevent overlap. AI helps execution, but humans still need to guide structure and priorities.
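A regular audit for overlap can be very simple. The sketch below groups pages that share the same primary keyword and intent. The `find_cannibalization` function, the content map structure, and the example URLs are all hypothetical, and a real audit would also need to handle near-duplicate keywords rather than exact matches only.

```python
from collections import defaultdict


def find_cannibalization(pages: dict) -> dict:
    """Group URLs that target the same primary keyword and intent.

    `pages` maps a URL to a (primary keyword, intent) pair. Only exact
    matches (after lowercasing and trimming) are detected in this sketch.
    """
    groups = defaultdict(list)
    for url, (keyword, intent) in pages.items():
        key = (keyword.strip().lower(), intent.strip().lower())
        groups[key].append(url)
    return {key: sorted(urls) for key, urls in groups.items() if len(urls) > 1}


# Hypothetical content map for illustration.
content_map = {
    "/blog/ai-content-scaling": ("scale content with AI", "informational"),
    "/guides/scaling-ai-content": ("Scale content with AI ", "informational"),
    "/tools/ai-writing-tools": ("ai writing tools", "commercial"),
}
print(find_cannibalization(content_map))
```

Any group this returns is a prompt for a human decision: merge the pages, differentiate their intent, or retire one of them.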