Introduction: Why AI-Only Content Workflows Break at Scale

If you’ve experimented with AI for SEO content, you’ve probably felt this tension already.

On one hand, AI makes content production faster. Research, outlines, first drafts: suddenly everything moves. On the other hand, something feels off when you try to scale. The content looks fine, but rankings stall. Trust feels thin. And you’re not fully confident hitting “publish.”

I’ve tested AI content workflows across different sites and use cases, including extended testing on a niche affiliate site where I compared fully AI-generated articles against human-reviewed ones. What I noticed: AI isn’t the problem. Unreviewed AI is.

What I realized early on is that these failures weren’t about AI quality. They were about missing human accountability. The biggest failures I’ve seen came from skipping human judgment, especially around accuracy, intent, and experience.

In this article, I’ll walk you through a human-in-the-loop AI content workflow for SEO that scales safely. Not a tool stack. Not hype. A real process that protects trust, EEAT, and long-term search performance.

Search Intent Clarification: What Readers Expect From an AI Content Workflow

When people search for an AI content workflow for SEO, they’re not looking for another list of tools.

Readers want:

- Clear workflow stages (not vague “use AI here” advice)

- Defined human review checkpoints

- Confidence that the process won’t hurt SEO or trust

- Alignment with how Google evaluates quality and usefulness

Where most existing content falls short is depth. Human oversight is mentioned—but rarely explained in a way you can actually implement.

That gap is exactly what this article fills. To deliver on those expectations, we first need to get clear on what an AI content workflow actually means.

What an AI Content Workflow for SEO Actually Means (Clarifying Scope)

Before getting into the process, it helps to clarify terms—because a lot of confusion starts here.

AI-generated content

Content produced primarily by AI systems and published with minimal or no human review or editorial control.

AI-assisted content

AI supports research, structure, or drafting, but humans guide, review, and finalize the content.

Human-in-the-loop workflows

AI is embedded into the workflow, but humans remain accountable at defined checkpoints—especially for strategy, accuracy, and expertise.

What I’ve found is that workflows matter far more than prompts or tools. The same AI output can rank—or fail—depending on how it’s handled.

The safest mindset is this: AI amplifies human judgment. It doesn’t replace it.

The Core Problem: Why Most AI Content Workflows Fail SEO Long-Term

Most broken workflows fail in predictable ways.

Here’s what I’ve consistently seen go wrong across content teams I’ve worked with over the past three years:

- Over-automation removes editorial accountability

- Content becomes generic and interchangeable

- Facts are assumed correct because “the AI said so”

- Experience and expertise signals disappear

- The trust signals that Google and users expect never show up

The SEO risk isn’t immediate. That’s what makes it dangerous.

Short-term traffic might hold. But over time, you see:

- Rankings that fluctuate without clear reasons (I saw this with a B2B blog where AI-only articles dropped 15-20 positions after a core update)

- Content that fails to stand out in competitive SERPs

- Reduced trust from users who sense something is “thin” (reflected in higher bounce rates and lower time-on-page)

A lot of fear around AI content comes from misunderstanding Google’s stance, which I broke down in Does Google Penalize AI Content? (What Google Actually Says).

These failures aren’t algorithm problems. They’re process problems.

The Human-in-the-Loop Principle (Foundation of a Safe Workflow)

The problems above point to a single root cause: removing human accountability at critical decision points. That’s why the solution centers on human-in-the-loop workflows.

Human-in-the-loop AI isn’t about editing commas after the fact.

In content operations, it means humans intervene at the moments that actually affect trust and usefulness.

I break those interventions into three types:

- Strategic: deciding what should be created and why (example: rejecting a content brief that targets the wrong searcher intent, even if keyword volume looks good)

- Editorial: shaping clarity, accuracy, and intent (example: rewriting an AI-generated section that explains a concept correctly but in a way that doesn’t match how the target audience thinks about it)

- Expert validation: confirming claims, nuance, and risk (example: flagging a health-related claim that needs medical review before publishing)

Partial oversight doesn’t work. If humans only review at the very end, most risks are already baked in.

This approach aligns naturally with EEAT because it answers:

- Who is responsible for this content?

- How was it created?

- Why should anyone trust it?

The SEO-Safe AI Content Workflow (Step-by-Step)

This is the workflow I’ve refined over two years of testing different review gates, failure modes, and team structures. It evolved from early failures where I skipped validation steps and paid the price in rankings and rework.

1. Strategy & Content Briefing (Human-Led)

This stage should never be delegated fully to AI.

Here’s what I handle manually:

- Validate search intent and audience need

- Decide the angle and unique value

- Identify topic risk (YMYL vs non-YMYL)

- Define experience requirements (examples, testing, opinions)

AI can assist with research and clustering, but humans must decide what deserves to exist.

This is where people-first thinking matters most, and it’s why I always anchor strategy decisions in the principles I outlined in Trusted AI SEO Foundations. That guide covers the strategic foundation in more depth, but the core idea is simple: decide the value before you draft.

2. Source & Knowledge Validation (Mandatory Human Gate)

Before drafting, I check:

- Are sources credible and recent?

- Do claims appear across multiple authoritative references?

- Is there space to add firsthand insight or proprietary experience?

This step sharply reduces the chance that hallucinated claims slip into the draft later.

AI helps collect sources. Humans decide which ones matter.
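If you want to make this gate explicit, here is a minimal Python sketch of the checks above. The `Source` fields, the two-year recency window, and the two-publisher minimum are illustrative assumptions, not thresholds from this workflow, and the `trusted` flag is meant to be set by a human reviewer.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Source:
    publisher: str
    published: date
    trusted: bool  # assumption: a human reviewer sets this, not the AI


def claim_passes_gate(sources, today, max_age_days=730, min_trusted=2):
    """Return True when enough trusted, recent sources back a single claim."""
    recent_trusted = [
        s for s in sources
        if s.trusted and (today - s.published).days <= max_age_days
    ]
    # Count distinct publishers so one outlet cited twice
    # does not pass as "multiple references".
    return len({s.publisher for s in recent_trusted}) >= min_trusted


# Hypothetical sources for one claim.
sources = [
    Source("Publisher A", date(2024, 5, 1), trusted=True),
    Source("Publisher B", date(2024, 9, 15), trusted=True),
    Source("Publisher C", date(2015, 1, 10), trusted=True),  # too old to count
]
print(claim_passes_gate(sources, today=date(2025, 1, 1)))  # prints True
```

The function only counts what a reviewer has already judged. Deciding whether a publisher deserves the `trusted` flag stays a human call.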

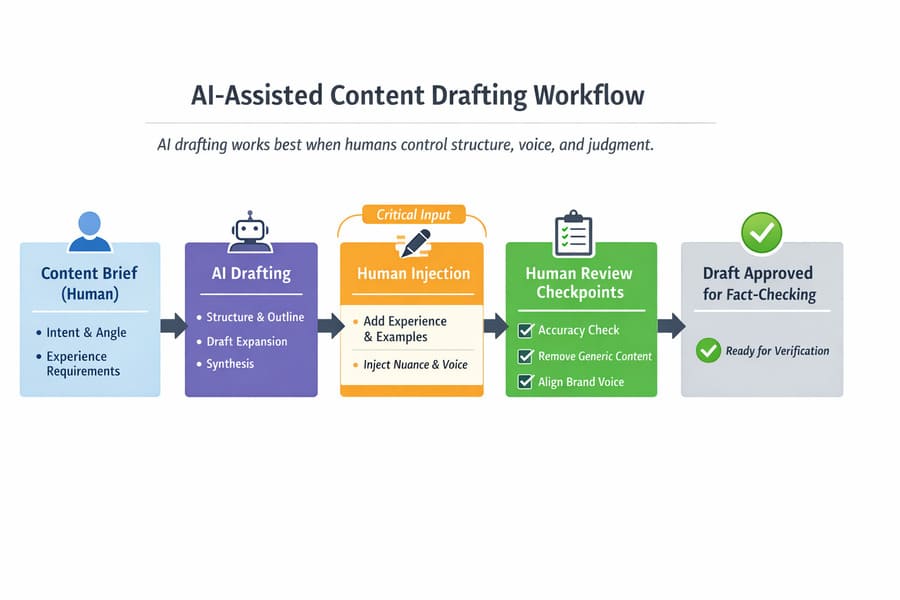

3. AI-Assisted Drafting (Controlled Collaboration)

This is where AI shines—if it’s controlled.

I let AI handle:

- Structure and section flow

- Initial explanations

- Draft expansion

What I always add manually:

- Personal observations (“what I noticed”)

- Examples from real usage

- Nuance AI tends to flatten

- Brand voice and tone

If a section feels generic, that’s my signal to rewrite it—not regenerate it.

This is also where human voice matters most, and I’ve shared practical techniques for that in Humanize AI Content: Practical Steps to Add Expertise & Voice. The key techniques—adding specific examples, using contractions naturally, and weaving in decision-making context—apply directly at this stage.

Once the draft reflects real human judgment, it’s ready for verification — not before.

4. Fact-Checking & Accuracy Review (Non-Negotiable)

This is the stage most teams skip—and regret later.

What I do here:

- Verify every factual claim

- Double-check statistics and dates

- Escalate uncertain points instead of guessing

- Remove claims that can’t be confidently supported

This step protects both SEO and reputation. No amount of optimization fixes incorrect content.
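One way to keep this review consistent is to record a verdict per claim. The sketch below is a hedged illustration of the keep, escalate, or remove logic described above. The `Verdict` names, the `triage_claim` inputs, and the sample claim labels are all hypothetical.

```python
from enum import Enum


class Verdict(Enum):
    KEEP = "keep"
    ESCALATE = "escalate"
    REMOVE = "remove"


def triage_claim(verified, has_source):
    """Map a reviewer's findings about one claim to an action.

    verified:   True if confirmed, False if contradicted, None if still unsure.
    has_source: whether any supporting source was found.
    """
    if verified is True and has_source:
        return Verdict.KEEP
    if verified is None and has_source:
        return Verdict.ESCALATE  # uncertain: hand it to someone who knows
    return Verdict.REMOVE  # contradicted or unsupported


def ready_for_next_stage(verdicts):
    """The draft moves on only when every remaining claim is a KEEP."""
    return all(v is Verdict.KEEP for v in verdicts)


# Hypothetical claims from a draft, labeled by the reviewer.
claims = {
    "statistic with a cited report": triage_claim(True, True),
    "launch date the reviewer is unsure about": triage_claim(None, True),
    "superlative with no source": triage_claim(None, False),
}
print(ready_for_next_stage(claims.values()))  # prints False
```

The draft stays blocked until escalated claims are resolved and unsupported ones are cut, which is the point of treating this stage as non-negotiable.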

5. EEAT Enhancement Before Optimization

Before touching SEO polish, I strengthen credibility signals.

That includes:

- Clear author attribution

- Experience indicators (tests, examples, decisions)

- Strong sourcing

- Context explaining why recommendations exist

This order matters. Optimizing thin content doesn’t make it helpful.

If you want a deeper breakdown of how experience, expertise, authority, and trust show up in AI-assisted content, I’ve covered that step-by-step in E-E-A-T for AI Content: How to Maintain Quality. The framework there explains what each signal looks like in practice, which helps at this review stage.

6. SEO & Technical Optimization (AI-Assisted, Human-Reviewed)

Only after content is solid do I move into SEO checks:

- Titles and headings that reflect intent

- Internal links that support the reader journey

- Schema and accessibility checks

- Meta descriptions that match the content—not hype it

AI can suggest optimizations. Humans confirm they make sense.

7. Final Editorial Review (Human Accountability Check)

Before publishing, I ask myself:

- Would I recommend this to someone I trust?

- Does it offer something competitors don’t?

- Is the intent fully satisfied?

If the answer is “almost,” it goes back for revision.

When teams struggle with consistency at this stage, I usually recommend documenting the review questions into a repeatable checklist. I’ve outlined how this review aligns with Google’s E-E-A-T and people-first content expectations in AI Content Review Checklist: What to Check Before You Hit Publish. The checklist there formalizes the key questions into a system anyone on the team can use.
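As a rough illustration of turning those three questions into a repeatable gate, here is a small Python sketch. The function name and the rule that only a plain “yes” counts are assumptions made for this example. They are not taken from the checklist article.

```python
REVIEW_QUESTIONS = (
    "Would I recommend this to someone I trust?",
    "Does it offer something competitors don't?",
    "Is the intent fully satisfied?",
)


def final_review(answers):
    """Return 'publish' only if every question got an unqualified 'yes'."""
    missing = [q for q in REVIEW_QUESTIONS if q not in answers]
    if missing:
        raise ValueError(f"Unanswered review questions: {missing}")
    all_yes = all(
        answers[q].strip().lower() == "yes" for q in REVIEW_QUESTIONS
    )
    # Anything short of "yes" (including "almost") sends the piece back.
    return "publish" if all_yes else "revise"


answers = dict.fromkeys(REVIEW_QUESTIONS, "yes")
answers["Is the intent fully satisfied?"] = "almost"
print(final_review(answers))  # prints revise
```

Raising an error on unanswered questions is a deliberate choice in this sketch: a skipped question should stop the publish step rather than pass silently.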

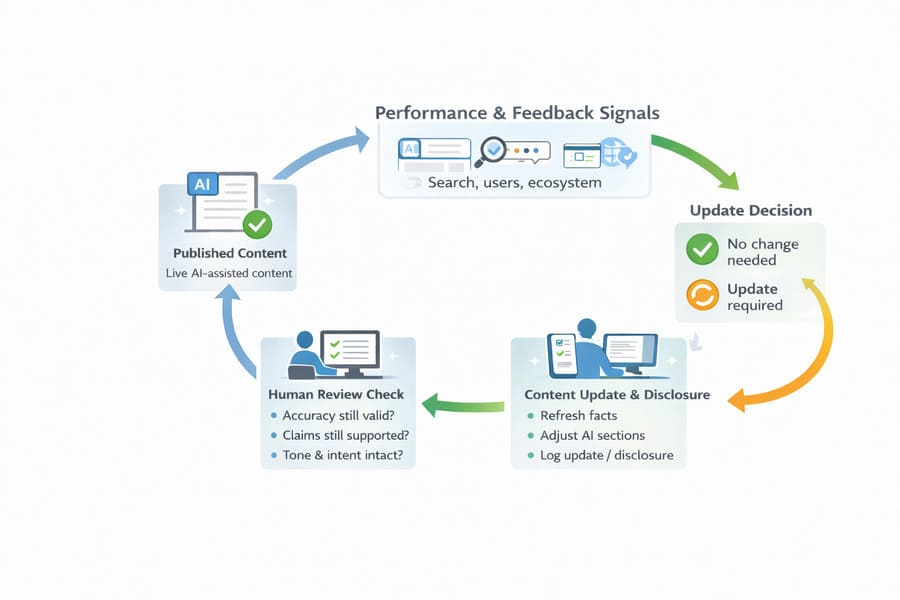

8. Post-Publication Monitoring & Updates

Workflows shouldn’t end at publish.

I monitor:

- Reader feedback and questions

- Accuracy over time

- Ranking changes tied to intent shifts

Corrections and updates aren’t failures. They show readers that the content is still being maintained.
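The eight stages above can be sketched as an ordered pipeline in which each stage needs a named human sign-off before the next one starts. This is a simplified model under stated assumptions: the stage identifiers, the `signoffs` mapping, and the reviewer names are hypothetical.

```python
STAGES = (
    "strategy_briefing",
    "source_validation",
    "ai_assisted_drafting",
    "fact_checking",
    "eeat_enhancement",
    "seo_optimization",
    "final_editorial_review",
    "post_publication_monitoring",
)


def next_stage(signoffs):
    """Return the first stage without a named human approver.

    signoffs maps stage name -> reviewer name. Because stages are checked
    in order, signing off a later stage never unblocks an earlier one.
    Returns None when every stage has an approver.
    """
    for stage in STAGES:
        if not signoffs.get(stage):
            return stage
    return None


# Hypothetical case: drafting was signed off, but source validation was skipped.
signoffs = {"strategy_briefing": "editor_a", "ai_assisted_drafting": "writer_b"}
print(next_stage(signoffs))  # prints source_validation
```

Storing a reviewer name rather than a boolean keeps accountability visible: for every stage, someone specific approved it.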

Risk Stratification: Not All Content Needs the Same Level of Review

One mistake I see is treating all content the same.

Instead, I classify content by risk:

- Low risk: opinion pieces, non-YMYL blogs

- Medium risk: comparisons, tactical guides

- High risk: finance, health, legal, and reputation topics

Higher risk = deeper human review.

For low-risk content, I might skip expert validation if I’m confident in the sources. Medium-risk content gets the full workflow as outlined above. For high-risk content, I add subject matter expert review, extended fact-checking against primary sources, and legal/compliance sign-off before publishing.

This keeps workflows efficient without cutting corners where it matters.
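A hedged sketch of how the tiers could map to review steps is shown below. The step names are made up for illustration, and treating expert validation as a default step that only low-risk content may skip is one reading of the tiers above, not a rule stated here.

```python
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def review_plan(risk, confident_in_sources=False):
    """List the review steps for one piece of content, by risk tier."""
    plan = ["core_workflow"]  # the eight stages always run
    # Only low-risk content with trusted sources may skip expert validation.
    if not (risk is Risk.LOW and confident_in_sources):
        plan.append("expert_validation")
    if risk is Risk.HIGH:
        plan.extend([
            "subject_matter_expert_review",
            "extended_fact_check_against_primary_sources",
            "legal_compliance_signoff",
        ])
    return plan


print(review_plan(Risk.LOW, confident_in_sources=True))  # prints ['core_workflow']
print(len(review_plan(Risk.HIGH)))  # prints 5
```

Keeping the mapping in one function means a change to the policy, such as adding a sign-off for high-risk topics, happens in one place instead of in each editor’s head.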

Ethical AI Content Workflow Safeguards

Ethics isn’t separate from SEO—it affects trust directly.

Key safeguards I build in:

- Bias and misinformation checks

- Clear citation standards

- Transparent AI assistance where readers expect it

- Defined correction processes

When readers trust your process, rankings tend to follow.

How This Workflow Scales Without Sacrificing Quality

The fear is always the same: “This sounds slow.”

In practice, it’s the opposite. After implementing this workflow with a small content team, we cut revision cycles from an average of 2-3 rounds to usually just one. Time-to-publish stayed roughly the same, but quality complaints dropped significantly.

Clear checkpoints:

- Prevent rework

- Reduce errors

- Make delegation easier

- Allow AI to scale the right parts—not the risky ones

Speed without accountability doesn’t scale. Process does. If scaling is your main concern, this workflow builds on the same principles I tested while writing Scale Content With AI: Ethical Workflows That Preserve Trust. That article covers team structure and delegation strategies in more depth.

How This Workflow Aligns With Google’s Quality Expectations

Google doesn’t reward or punish AI. It evaluates content.

Google emphasizes people-first, helpful content over how content is produced, as outlined in Google Search Central — Creating helpful, reliable, people-first content.

This workflow aligns with:

- People-first content principles

- Clear authorship and responsibility

- Helpful, intent-satisfying pages

- Long-term quality signals

AI-assisted content can rank—when humans stay in control.

Common AI Content Workflow Mistakes to Avoid

Over the past three years, working with different content operations, these are the recurring mistakes I’ve seen:

- Publishing AI drafts as-is

- Skipping fact checks

- Optimizing before improving quality

- Treating AI output as authoritative

- Ending the workflow at publish

Avoiding these mistakes alone puts you ahead of most competitors.

When This Workflow Is Overkill (And When It’s Not)

It may be overkill if:

- You’re writing low-stakes internal content

- Accuracy and trust aren’t critical

It’s essential if:

- SEO is a growth channel

- You care about long-term rankings

- Trust, authority, and brand matter

You can adapt the depth—but don’t remove the safeguards.

FAQ

How do you integrate human review checkpoints into AI content workflows?

By defining non-negotiable stages where humans approve strategy, verify facts, and finalize content before publishing.

What are the key stages in an AI-assisted SEO content workflow?

Strategy, source validation, AI drafting, fact-checking, EEAT enhancement, SEO optimization, editorial review, and post-publish monitoring.

What is the risk of using AI content without human oversight?

Inaccuracies, loss of trust, weak EEAT signals, and long-term SEO instability.

How do you fact-check AI-generated content for SEO?

By verifying claims against sources, escalating uncertainty, and removing unsupported statements.

Should you disclose AI use to readers?

Yes, when AI assistance is material to creation and readers would reasonably expect transparency.

Can AI-assisted content still meet Google’s helpful content guidelines?

Yes, when humans remain responsible for quality, accuracy, and intent satisfaction.

Conclusion: AI Scales Output, Humans Protect Trust

AI is powerful infrastructure. But it isn’t authority.

The workflows that win in SEO don’t chase speed alone. They balance efficiency with accountability. They let AI scale output—while humans protect trust.

If you build your AI content workflow around that principle, you don’t just avoid risk. You build something sustainable.

That’s where long-term SEO growth comes from.