Why E-E-A-T Matters More Than Ever in the AI Content Era

AI has fundamentally changed how content is created. What once required research teams, editors, and weeks of iteration can now be produced in minutes using large language models.

This speed has obvious advantages, but it has also created a visible quality and trust gap across search results.

During competitor analysis and quality audits of AI-assisted pages, one issue appears again and again:

Many articles technically answer the question, but very few feel reliable enough to trust.

They are well-structured.

They are fluent.

They follow SEO patterns.

Yet they often fail to show judgment, accountability, or real-world context.

This is exactly why E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) matters more for AI content in 2026 than it did in the pre-AI era.

Google’s position has remained consistent:

- AI-generated content is not penalized simply for using AI.

- Content that is thin, misleading, or unhelpful is downgraded, regardless of how it was created.

Both points align with Google’s official guidance in Google Search Central’s documentation on AI-generated content, which emphasizes that content quality and trust signals matter more than whether AI was used.

E-E-A-T functions as a quality evaluation lens, not a publishing checklist.

As AI output increases, Google’s quality expectations are becoming stricter, not looser. The December 2025 Core Update reinforced this by explicitly tightening expectations for AI-assisted content quality and E-E-A-T across most queries.

This guide explains how Google evaluates the quality of AI-assisted content using the E-E-A-T framework.

It focuses on judgment, quality signals, and why AI-written pages fail trust checks, not on building AI workflows or ethics systems.

Summary:

E-E-A-T for AI Content

- Google does not evaluate content based on whether AI was used; it evaluates trust and quality signals.

- Experience, accuracy, authorship clarity, and human review play a critical role in how AI-assisted content is assessed.

- AI content often fails E-E-A-T checks due to overconfidence, lack of context, or missing accountability.

- Small editorial decisions, such as cautious phrasing, experience-based edits, and transparency, significantly improve trustworthiness.

How Google Interprets E-E-A-T in 2026 (AI-Specific Context)

The E-E-A-T framework itself has not structurally changed, but how Google applies it has evolved in an AI-heavy ecosystem.

Google no longer evaluates content based only on correctness or keyword alignment. Instead, it assesses whether a page appears:

- Reviewed

- Intentional

- Accountable

- Created for users rather than systems

AI assistance increases scrutiny because it increases the risk of:

- Overconfident but unverified claims

- Generic explanations without lived context

- Content that sounds authoritative but lacks responsibility

As a result, AI-assisted content that feels automated, even when factually correct, often underperforms.

What matters is not who (or what) wrote the first draft.

What matters is who stands behind the final output.

Experience: The Most Common Failure Point in AI Content

Experience is the E-E-A-T component AI struggles with most, and the one Google scrutinizes most closely.

AI systems do not:

- Use tools

- Test workflows

- Make real-world trade-offs

- Learn from practical mistakes

They generate language based on probability, not participation.

What Experience Signals Look Like in AI-Assisted Content

In AI-assisted content, experience is not demonstrated through length or polish, but through signals that show real-world involvement. Google looks for signs that a human has reviewed, questioned, and refined the content based on actual use or editorial judgment. These signals often appear as small clarifications, practical constraints, or honest limitations that AI alone would not introduce. Even brief, experience-based edits can clearly distinguish reviewed content from automated output.

In low-performing AI pages, the absence of experience typically shows up as:

- Generic advice with no constraints

- No mention of limitations or trade-offs

- No indication that the author has actually done what’s being described

Even small experience signals matter.

For example, during content audits, I often see AI-generated pages explaining complex topics like SEO updates or medical concepts with perfect structure but no real constraints.

One page may confidently describe best practices while ignoring recent changes, edge cases, or risks, making the guidance technically correct but practically unreliable.

In these cases, the issue isn’t grammar or SEO; it’s the absence of lived context and judgment, which causes the content to fail E-E-A-T checks.

Google’s quality systems favor content that includes:

- Brief firsthand observations

- Context about what didn’t work

- Clear limitations or conditions

Experience does not require long case studies. In many cases, a single honest insight adds more trust than an entire unedited AI section.

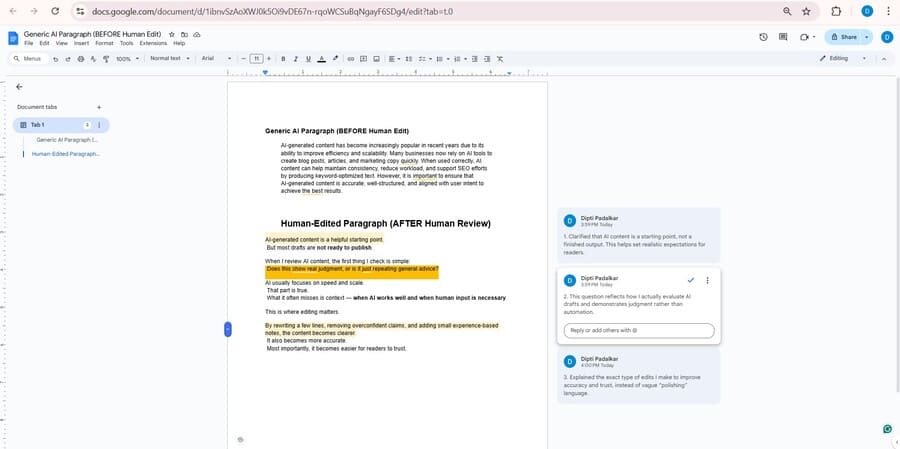

Example: “Feels Automated” vs “Reviewed With Experience”

AI-only draft (feels automated):

“To improve AI content quality, always use clear headings, add keywords naturally, and ensure the content is informative and well structured.”

Human-reviewed version (experience added):

“When reviewing AI drafts, I often find that headings look correct but say nothing useful. In one case, rewriting a generic H2 like ‘Benefits of AI Content’ into ‘Where AI Content Fails Without Human Review’ immediately improved clarity and engagement, without adding more words.”

Why this matters:

The second version signals real editorial judgment, not just best practices. These small, experience-based edits are exactly where AI content gains trust.

Expertise: Judgment Matters More Than Fluency

AI is fluent, but expertise is selective.

How Expertise Shows Up Beyond AI Fluency

Expertise in AI-assisted content is not about how well a topic is explained, but about how selectively and responsibly it is explained. Google looks for evidence that the author understands nuance, limitations, and context, not just definitions or best practices.

True expertise appears when content avoids absolute claims, explains trade-offs, and acknowledges where guidance may not apply universally. These judgment-based decisions are what separate fluent AI output from expert-reviewed content.

One of the most common weaknesses in AI-assisted content is over-explanation without judgment. AI expands everything evenly, even when nuance or restraint would be more appropriate.

Expertise shows up when content:

- Explains why a recommendation may not apply universally

- Identifies edge cases

- Avoids absolute claims when certainty is limited

Surface-level explanations are everywhere, especially in AI and SEO topics. What’s missing is contextual decision-making, something only human expertise can provide.

For a structured breakdown, use the detailed AI content quality E-E-A-T checklist to validate experience, accuracy, and accountability before publishing.

Authoritativeness: Making Accountability Visible

Authoritativeness answers a simple but critical question:

Who is responsible for this information?

With AI-assisted content, unclear accountability weakens trust immediately. Anonymous or generic pages struggle to signal authority, regardless of how polished the writing appears.

Strong authoritativeness signals include:

- A real, identifiable author

- Consistent topical focus across the site

- Alignment with other trusted content

Clear authorship helps both users and search engines connect content to real expertise and responsibility.

Trustworthiness: Accuracy Is Non-Negotiable

Trustworthiness is where AI content is most fragile.

Common issues identified during AI content reviews include:

- Confident but incorrect statements

- Outdated facts presented as current

- Missing context around risks or limitations

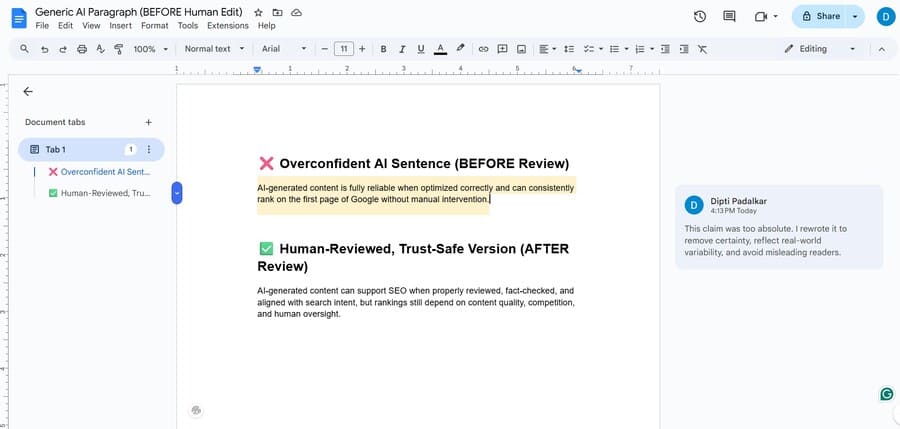

Example: Confidence vs Accuracy in AI Content

AI-generated content often fails trust checks not because it is obviously wrong, but because it sounds too certain.

Overconfident AI phrasing:

“Google prioritizes AI-generated content that follows E-E-A-T guidelines.”

Reviewed, trust-safe phrasing:

“Google does not prioritize AI-generated content itself; it evaluates content based on E-E-A-T signals, regardless of its creation method.”

A single clarification like this can prevent an otherwise high-quality page from being perceived as misleading.

Google does not require multiple errors to downgrade trust.

A single misleading section can negatively affect the perception of the entire page.

Strong trust signals include:

- Fact-checking key claims

- Using cautious language where uncertainty exists

- Making review and responsibility visible
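As a rough aid for the "cautious language" check above, the following is a minimal sketch of a script that flags sentences containing absolute wording so a human reviewer can decide whether the certainty is justified. The word list and the function name `flag_overconfident_sentences` are illustrative assumptions, not part of any Google tool or guideline, and a flag only means "look at this", not "this is wrong".

```python
import re

# Hypothetical watch list of absolute terms; adjust it to your own style guide.
ABSOLUTE_TERMS = ("always", "never", "guarantees", "guaranteed", "proven", "definitely")

def flag_overconfident_sentences(text):
    """Return the sentences that contain an absolute term, for human review."""
    # Naive sentence split: break on whitespace that follows ., ! or ?
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    pattern = re.compile(r"\b(" + "|".join(ABSOLUTE_TERMS) + r")\b", re.IGNORECASE)
    return [s for s in sentences if pattern.search(s)]

draft = (
    "Always use clear headings. "
    "This approach may help in some cases. "
    "Schema markup guarantees higher rankings."
)
flagged = flag_overconfident_sentences(draft)
for sentence in flagged:
    print("REVIEW:", sentence)
```

A keyword match cannot judge whether a claim is accurate, so this only narrows down where an editor should look first; the fact-checking itself stays manual.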

While this guide explains how trust is evaluated in AI-assisted content, applying those trust signals consistently requires clear ethics, human oversight, and workflow safeguards.

Where Human Review Is Non-Negotiable for AI Content

Human review becomes essential when AI-generated content can directly affect decisions, outcomes, or user safety.

In finance-related topics, even small inaccuracies or oversimplified explanations can mislead readers making money-related decisions.

Health and medical content requires careful review to avoid unsafe advice, missing disclaimers, or outdated information that could cause harm.

Legal content demands precision and clear boundaries, since AI models often present generalized guidance without accounting for jurisdictional differences.

Product comparisons and recommendations also require human judgment to validate claims, avoid bias, and reflect real-world usage.

Finally, any content that makes claims about outcomes, performance, or results must be reviewed to ensure accuracy, appropriate caution, and transparency.

Why Long AI Content Still Fails Quality Checks

Length alone does not guarantee trust.

Many long AI articles fail because they:

- Repeat the same idea using different wording

- Over-optimize keywords

- Expand sections without adding insight

From a quality perspective, Google evaluates usefulness and intent alignment, not word count. Long content without judgment or originality often performs worse than shorter, well-reviewed pages.

This is why human review and expertise still matter in AI-generated content.
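Repetition, the first failure listed above, can be partly surfaced before a human edit. The sketch below is one possible approach, assuming Python's standard `difflib` module and an arbitrary similarity threshold of 0.7; the function name `find_similar_sentences` is hypothetical. It compares sentences character by character, so it catches near-verbatim restatements but will miss the same idea expressed in genuinely different wording.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations

def find_similar_sentences(text, threshold=0.7):
    """Return (sentence_a, sentence_b, ratio) for sentence pairs at or above the threshold."""
    # Naive sentence split: break on whitespace that follows ., ! or ?
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    pairs = []
    for a, b in combinations(sentences, 2):
        ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if ratio >= threshold:
            pairs.append((a, b, round(ratio, 2)))
    return pairs

draft = (
    "AI content needs human review. "
    "Length alone does not guarantee trust. "
    "AI content needs a human review."
)
pairs = find_similar_sentences(draft)
for a, b, ratio in pairs:
    print(f"Possible repetition ({ratio}): {a!r} / {b!r}")
```

The pairwise comparison grows quadratically with the number of sentences, which is acceptable for a single article but would need a different approach for site-wide audits.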

Common E-E-A-T Failure Patterns in AI Pages

Across multiple SERP analyses, low-quality AI pages tend to fail for the same structural reasons:

- Generic explanations without original context

- No visible author responsibility or accountability

- Overconfident tone unsupported by evidence

- Repetition of ideas instead of added insight

To systematically detect these structural weaknesses, follow a formal AI content audit process before publishing or scaling AI-assisted pages.

These failures are structural, not technical. They occur when AI output is treated as final content rather than a starting point.

E-E-A-T Risk Zones for AI Content

Not all topics carry the same trust expectations.

Higher-risk (YMYL) topics include:

- Finance

- Health

- Legal

- Safety

In these areas, Google expects:

- Stronger experience and expertise signals

- Conservative, precise language

- Clear accountability

Lower-risk informational content still requires accuracy, and tolerance for ambiguity is lower than it was before widespread AI use.

Higher-risk topics demand more than just quality awareness; they require structured review processes and responsible AI use. For a system-level approach covering governance, safety, and human-in-the-loop workflows, refer to the Trusted AI SEO framework.

Ethical Use of AI Content (Quality Evaluation Perspective)

Ethics and E-E-A-T are closely connected, but in this guide, ethics matter only as a quality evaluation signal, not as an operational framework.

From a quality standpoint, unethical AI usage typically appears as:

- Unverifiable claims

- Hidden responsibility

- Speed prioritized over accuracy

Transparency strengthens trust because it reinforces intent and accountability.

Frequently Asked Questions

How does Google assess trust and quality signals in AI-assisted content?

Google does not evaluate content based on whether AI was used. Instead, it assesses quality signals such as experience, accuracy, authorship clarity, and trustworthiness.

Does Google directly score E-E-A-T?

No. Google does not assign a numeric E-E-A-T score.

What does E-E-A-T mean for AI content writers?

For AI content writers, E-E-A-T means AI drafts must be reviewed, edited, and validated by a human. Writers are responsible for adding experience, correcting overconfident claims, and ensuring accuracy; AI can assist, but accountability stays human.

Can short AI content meet E-E-A-T standards?

Yes. Length does not determine quality. Short AI-assisted content can meet E-E-A-T expectations if it is accurate, reviewed, and aligned with intent.

Do images improve E-E-A-T?

Images alone do not increase E-E-A-T, but original visuals can support experience and trust when showing real-world use.

Is AI disclosure required for E-E-A-T?

Google does not require disclosure, but transparency can strengthen accountability and user trust.

Final Takeaway

AI can accelerate content creation.

E-E-A-T determines whether that content deserves trust.

When AI is used as an assistant, and human experience, expertise, and responsibility remain central, content naturally aligns with what Google rewards in 2026.