Introduction

If you run SEO for clients or manage content at scale, AI feels like a relief at first.

Drafts come fast. Writers move quicker. Topic coverage improves. On paper, everything looks efficient. But after auditing AI-assisted content across multiple client sites and industries, a pattern emerged: the weakest part of AI content isn’t writing; it’s accountability.

In agency work, this shows up subtly. Rankings plateau instead of growing. Clients ask for proof behind claims. Pages feel “correct” but not convincing.

When we audit deeper, the issue usually isn’t optimization. It’s that no one truly verified what the AI produced.

That’s why this guide exists.

I’ll walk you through how I audit AI-generated content in real SEO and agency environments, using a structured, human-led process that protects accuracy, E-E-A-T, and long-term trust. This isn’t about avoiding penalties. It’s about publishing content you can defend, even under scrutiny.

Why AI Content Needs a Separate Audit Process (Context + Positioning)

Traditional SEO reviews assume human intent. AI breaks that assumption.

In client audits, I’ve seen AI-generated pages that:

- Rank well initially, but fail to convert

- Answer the query, but miss important context

- Contain no outright lies, yet still mislead

The issue is not malicious intent. It’s probabilistic output. AI predicts text. It doesn’t verify truth.

For agencies, this creates a new responsibility layer. When content is produced faster than it can be reviewed, risk increases quietly. That’s why AI-assisted content needs a dedicated audit process, separate from standard on-page checks.

This article supports your broader E-E-A-T strategy by focusing on post-draft verification. Where the pillar article explains why E-E-A-T matters, this guide shows how to enforce it operationally, page by page, client by client.

In practice, I recommend a formal AI content audit whenever:

- Content is produced programmatically or semi-scaled

- Pages target commercial or advice-driven queries

- Multiple writers or tools contribute

- Client trust and authority matter more than speed

Skipping this step doesn’t save time. It just delays problems.

What “Audit AI-Generated Content” Actually Means (and What It Doesn’t)

I want to be very clear here, because this is where most teams go wrong.

To audit AI-generated content does not mean:

- Running it through AI detectors

- Trying to “humanize” text blindly

- Hiding AI involvement

I’ve audited pages that passed detectors and still failed basic factual checks. Detectors don’t measure accuracy, experience, or trust. Editors do.

In SEO and agency workflows, an audit means:

- Verifying claims before users or Google questions them

- Checking whether E-E-A-T signals are demonstrated, not implied

- Assigning ownership for what’s published

Once you treat AI content like a junior writer’s draft, useful but untrusted until reviewed, the process becomes logical instead of stressful.

Step 1: Inventory & Risk Prioritization (Before You Review Anything)

One of the most common agency mistakes is auditing content randomly.

Instead, I always begin with inventory and risk prioritization.

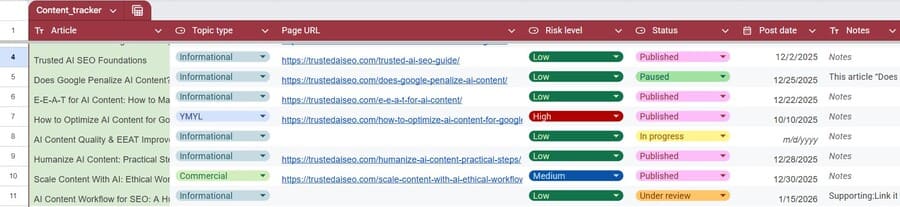

In real projects, I group AI-assisted pages into three tiers:

High risk

- YMYL or advisory content

- Legal, health, finance-adjacent topics

- Pages clients actively promote

Medium risk

- Service pages

- Comparison guides

- Authority-building blog posts

Low risk

- Long-tail informational blogs

- Supporting content with minimal impact

For each page, I document:

- Traffic and conversion importance

- Topic sensitivity

- Assigned author and reviewer

- Last verified date

In practice, this step helps senior reviewers focus on high-impact pages instead of spending equal time on low-risk content.
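The inventory can live in a spreadsheet, but a minimal sketch in Python shows the idea. The field names, the `monthly_sessions` metric, and the sort order (risk tier first, then never-verified or oldest-verified pages, then traffic) are my illustrative assumptions, not a fixed standard, and the URLs are made up.

```python
from dataclasses import dataclass
from datetime import date
from enum import IntEnum
from typing import List, Optional


class RiskTier(IntEnum):
    HIGH = 1    # YMYL, advisory, or actively promoted pages
    MEDIUM = 2  # service pages, comparison guides, authority posts
    LOW = 3     # long-tail and supporting content


@dataclass
class PageRecord:
    url: str
    tier: RiskTier
    monthly_sessions: int                  # hypothetical traffic metric
    author: str
    reviewer: Optional[str] = None
    last_verified: Optional[date] = None   # None means never verified


def review_queue(pages: List[PageRecord]) -> List[PageRecord]:
    """Order pages for review: highest risk first, then pages never
    verified or verified longest ago, then highest traffic."""
    def sort_key(page: PageRecord):
        verified = page.last_verified or date.min  # never verified sorts first
        return (page.tier, verified, -page.monthly_sessions)
    return sorted(pages, key=sort_key)


# Made-up inventory for illustration only.
inventory = [
    PageRecord("/blog/long-tail-tip", RiskTier.LOW, 120, "A. Writer"),
    PageRecord("/guides/tax-deductions", RiskTier.HIGH, 900, "B. Writer",
               "C. Editor", date(2024, 1, 10)),
    PageRecord("/services/seo-audit", RiskTier.MEDIUM, 2400, "A. Writer"),
    PageRecord("/guides/loan-basics", RiskTier.HIGH, 300, "B. Writer"),
]
queue = review_queue(inventory)
for page in queue:
    print(page.tier.name, page.url)
```

The tier itself is still assigned by a person. The code only keeps the queue honest, so a high-risk page that has never been verified cannot sit behind a low-risk post just because the post gets more traffic.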

Step 2: Accuracy & Fact Verification (The Highest-Failure Area)

This is where AI content fails most often and least visibly.

In client audits, I rarely see dramatic hallucinations. Instead, I see statistics that were accurate two years ago, simplified definitions missing critical conditions, and advice stated without context or limitations.

That’s why I use claim-level verification, not paragraph review.

Here’s what that looks like in practice:

I highlight every factual, numerical, or advisory claim and ask:

- Could this be challenged by an informed reader?

- Would I defend this in front of a client?

If the answer isn’t a clear yes, the claim gets traced to a source.

I follow a strict source hierarchy:

- Primary sources (official docs, research, regulations)

- Secondary sources (reputable industry analysis)

- Tertiary summaries only for background

Google has clarified that AI-assisted content is acceptable as long as it meets the quality standards in its guidance on AI-generated content.

In one audit for a SaaS client, an AI draft cited “industry benchmarks” without sources. Nothing was technically wrong, but nothing was verifiable either. We rewrote those sections conservatively and added citations so the client could confidently stand behind the claims during internal and legal review.

If a claim can’t be verified, I do one of three things:

- Rewrite it with explicit uncertainty

- Replace it with sourced information

- Remove it entirely
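If you track claims in a tool rather than in document comments, the rule above can be sketched as a small claim log. This is a simplified illustration under my own assumptions: the class and function names are hypothetical, and the example claims are invented. The one rule it encodes from the hierarchy is that a tertiary summary never counts as verification.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class SourceLevel(Enum):
    PRIMARY = 1     # official docs, research, regulations
    SECONDARY = 2   # reputable industry analysis
    TERTIARY = 3    # summaries, background only


class Resolution(Enum):
    HEDGED = "rewritten with explicit uncertainty"
    REPLACED = "replaced with sourced information"
    REMOVED = "removed entirely"


@dataclass
class Claim:
    text: str
    source_level: Optional[SourceLevel] = None   # best source the reviewer found
    resolution: Optional[Resolution] = None      # reviewer's decision, if any


def is_verified(claim: Claim) -> bool:
    # Tertiary sources are background only, so they do not verify a claim.
    return claim.source_level in (SourceLevel.PRIMARY, SourceLevel.SECONDARY)


def open_claims(claims: List[Claim]) -> List[Claim]:
    """Claims that are neither verified nor resolved by the reviewer."""
    return [c for c in claims if not is_verified(c) and c.resolution is None]


# Invented claims for illustration only.
claims = [
    Claim("Feature X is described in the vendor's official docs",
          SourceLevel.PRIMARY),
    Claim("Most teams see results within weeks", SourceLevel.TERTIARY),
    Claim("Industry benchmarks show strong gains"),
    Claim("An unsourced statistic", resolution=Resolution.REMOVED),
]
blocking = open_claims(claims)
for claim in blocking:
    print("Unresolved:", claim.text)
```

A page clears this step only when `open_claims` comes back empty. Which of the three resolutions applies remains an editor's call; the log just makes sure no claim is skipped.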

Step 3: E-E-A-T Alignment Audit (Applied, Not Theoretical)

E-E-A-T isn’t something you sprinkle on content. It’s something you validate.

In agency audits, this step often reveals the biggest gaps, especially on AI-assisted pages that “sound right” but lack substance.

Here’s how I apply E-E-A-T practically:

Experience

For experience, I look for signs the author has actually done the work. For example, if a section says “set up your dashboard,” I check whether it mentions setup friction, edge cases, or common errors. If it reads like a clean summary with no friction, it’s usually an AI abstraction.

Expertise

For expertise, I pressure-test explanations by asking follow-up questions and by checking whether each paragraph carries weight. If removing one paragraph breaks the logic of the section, that’s a good sign. If nothing changes, the content is likely surface-level.

Authoritativeness

For authoritativeness, I check whether the author is a logical source for the advice. A generic byline on a compliance or health topic is a red flag, even if the content itself sounds correct.

Trust

For trust, I look at whether the page is defensible. Are sources clear? Is authorship real? Could the client explain how this content was reviewed if questioned?

In one audit, we removed an entire “Top Best Practices” section because none of the recommendations reflected the author’s actual process—they were aggregated summaries without ownership.

This is where applied E-E-A-T validation matters, especially in AI-assisted workflows grounded in a practical trusted AI SEO guide rather than theoretical guidelines.

Step 4: Trust & Transparency Checks (Where Most Sites Fail)

This is the quietest failure point and the most damaging.

Many sites technically “do SEO right” but still feel untrustworthy. When I audit why, it usually comes down to missing transparency.

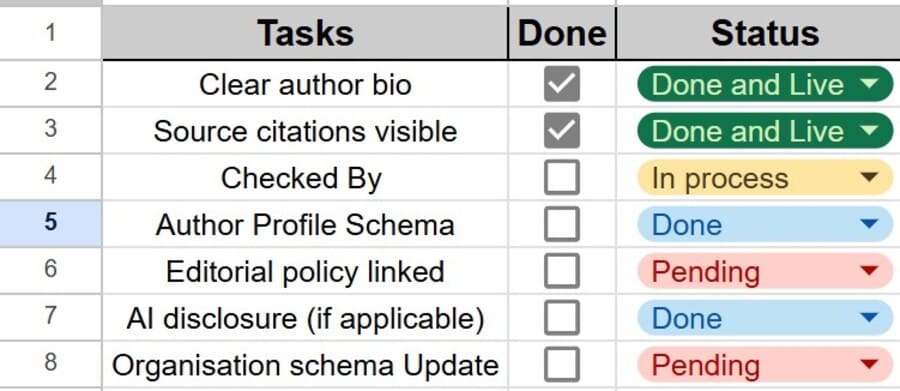

I check:

- Whether AI involvement should be disclosed for the topic

- Whether editorial policies are visible

- Whether authors are real and verifiable

Patterns I remove immediately:

- Generic author bios

- Anonymous advice content

- Overconfident tone without sourcing

In agency contexts, transparency protects both you and the client. It reduces disputes and builds confidence when rankings fluctuate.

Step 5: Editorial Accountability & Audit Trails

If a page fails and no one knows who reviewed it, the system failed, not the content.

In every AI content audit I run, I enforce basic accountability:

- Named reviewer

- Review date

- Notes on major changes

For higher-risk pages, I also document:

- Source disputes

- Update schedules

- Escalation decisions

Audit trails make it clear who reviewed what and why, which reassures clients during reviews and ranking fluctuations.
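An audit trail does not need special software. As a rough sketch, assuming one JSON line per review appended to a log file, a record might look like the following. The field names, the rule that high-risk pages need a scheduled update, and the example values are my assumptions for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class AuditEntry:
    url: str
    reviewer: str                      # named reviewer
    review_date: date
    change_notes: str = ""             # notes on major changes
    high_risk: bool = False
    source_disputes: List[str] = field(default_factory=list)
    next_update: Optional[date] = None  # update schedule for high-risk pages
    escalation: str = ""               # escalation decision, if any


def missing_fields(entry: AuditEntry) -> List[str]:
    """Return the accountability fields that are still empty."""
    missing = []
    if not entry.reviewer.strip():
        missing.append("reviewer")
    if entry.high_risk and entry.next_update is None:
        missing.append("next_update")
    return missing


def to_log_line(entry: AuditEntry) -> str:
    """Serialize one entry as a JSON line, with dates in ISO format."""
    record = asdict(entry)
    record["review_date"] = entry.review_date.isoformat()
    record["next_update"] = (
        entry.next_update.isoformat() if entry.next_update else None
    )
    return json.dumps(record, sort_keys=True)


# Invented entry for illustration only.
entry = AuditEntry(
    url="/guides/loan-basics",
    reviewer="C. Editor",
    review_date=date(2025, 3, 1),
    change_notes="Replaced unsourced benchmark with cited figure",
    high_risk=True,
)
print(missing_fields(entry))
print(to_log_line(entry))
```

The point of `missing_fields` is to block sign-off until the gaps are filled, so accountability is checked at publication time instead of reconstructed after something goes wrong.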

This accountability layer fits naturally into a broader human-in-the-loop AI content workflow, where AI drafts are reviewed, verified, and signed off before publication.

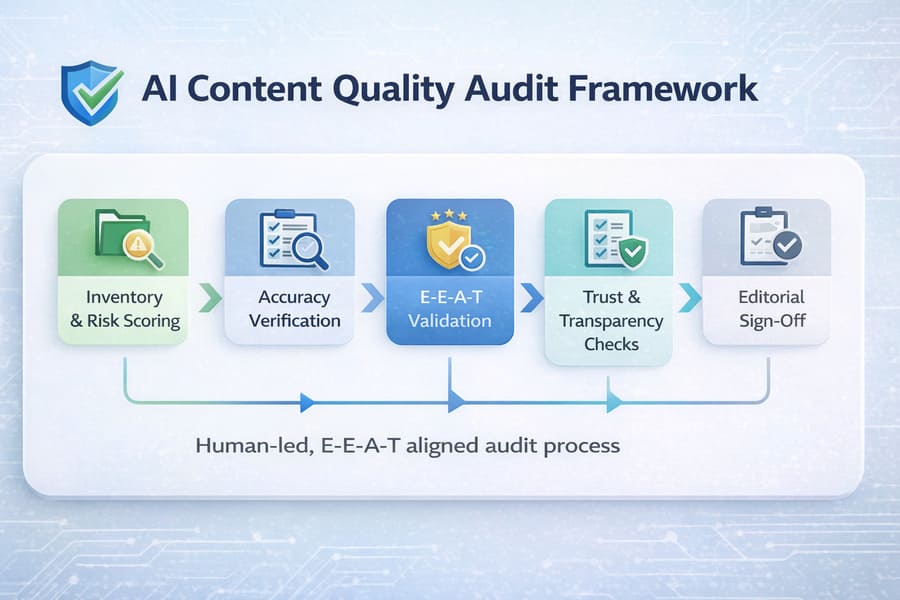

A Practical AI Content Quality Audit Framework (End-to-End)

Here’s the full framework as used in real SEO operations:

- Inventory and risk scoring

- Claim-level accuracy checks

- E-E-A-T validation

- Trust and transparency review

- Editorial sign-off

Automation supports detection and crawling. Humans handle judgment.

This framework exists to prevent a common agency failure mode: publishing quickly, then spending weeks correcting avoidable errors after launch.

E-E-A-T Audit Checklist (Publisher-Ready)

Once the audit framework is in place, a practical E-E-A-T audit checklist answers one question:

Can this page stand up to scrutiny six months from now?

I use tiered checklists:

- One for general SEO content

- One for high-risk or advisory pages

Each audit ends in one of three decisions:

- Approved

- Needs revision

- Removed

Acceptable AI use after audit means:

- AI-assisted drafting or structure

- Humans verified facts and intent

- Accountability is clear

For teams that want a more detailed breakdown of review criteria, refer to the structured AI content review checklist used to validate AI-assisted pages before publication.

Common AI Content Audit Mistakes to Avoid

Across agency audits, the same mistakes repeat:

- Auditing for AI detectors instead of readers. I’ve seen teams rewrite clear, accurate sentences just to lower detector scores while leaving unsourced claims untouched—which completely misses the real risk.

- Treating E-E-A-T like a checklist, not evidence

- Over-automating judgment-heavy tasks

- Never re-auditing content post-publication

Avoiding these alone puts you ahead of most SEO teams using AI.

FAQs

Does Google penalize AI-generated content?

No. Google evaluates quality and intent, not how content is produced.

How do you audit AI content for accuracy?

By confirming claims with trustworthy sources and removing statements that can’t be confidently backed up.

Can AI-written content meet E-E-A-T standards?

Yes, when humans provide experience, verification, and accountability.

Do I need to disclose AI usage to readers?

Only when it affects trust or user expectations.

How This Audit Process Supports Long-Term Trust

AI accelerates content creation. Audits protect credibility.

When you audit AI-generated content properly, you’re not slowing SEO; you’re stabilizing it. When you verify before publishing, you avoid the expensive cycle agencies fall into: launch fast, fix later, and lose trust along the way.

AI can draft. Humans must decide what’s true. That’s how AI content earns trust at scale.

If your site has experienced ranking instability or traffic decline after scaling AI content, consider a structured Google traffic drop recovery consultation to diagnose the root cause and protect long-term visibility.